Advanced Monte Carlo Simulation for Quantitative Tissue Attenuation Correction in Preclinical Imaging and Drug Development


Abstract

This comprehensive article details the application of Monte Carlo simulation for correcting tissue photon attenuation in biomedical imaging. It addresses the foundational principles of stochastic modeling of photon transport through heterogeneous tissues, explores specific methodological implementations for PET, SPECT, and optical imaging, provides troubleshooting strategies for computational and physical model inaccuracies, and critically compares Monte Carlo-based correction with analytical and empirical methods. Tailored for researchers, scientists, and drug development professionals, the content bridges theoretical physics with practical applications in quantitative image analysis for therapeutic efficacy studies.

Mastering the Physics: Core Principles of Monte Carlo for Photon Transport in Biological Tissues

Within the broader thesis on Monte Carlo simulation for tissue attenuation correction, this application note addresses the fundamental challenge of signal attenuation in complex biological tissues. Simple corrections based on the Beer-Lambert law assume homogeneous optical properties, which leads to significant quantification errors in real-world scenarios such as tumor imaging, brain mapping, and drug distribution studies. This note summarizes the experimental evidence for these errors, provides validation protocols, and lists resources for advanced correction using Monte Carlo techniques.

Table 1: Attenuation Coefficients (µ) of Common Tissue Components

| Tissue Component | Mean Attenuation Coeff. (µ) [cm⁻¹] @ 650 nm | Scattering Fraction | Variability (Std Dev) [cm⁻¹] | Notes |
|---|---|---|---|---|
| Adipose Tissue | 0.5 - 1.2 | 70% | ± 0.3 | Highly dependent on lipid content. |
| Dense Stroma | 2.5 - 4.0 | 85% | ± 0.8 | Collagen-rich regions cause high scatter. |
| Blood Vessel (oxygenated) | 2.0 - 3.5 | 50% | ± 1.2 | Strongly influenced by oxygenation. |
| Tumor Core (Necrotic) | 0.8 - 1.5 | 60% | ± 0.5 | Lower scatter due to cellular debris. |
| Cortical Bone | 3.0 - 5.0 | 90% | ± 1.0 | Extremely high; scattering-dominant. |
| Assumed Homogeneous Model | 1.5 (fixed) | N/A | 0 | Leads to 30-70% signal error. |

Table 2: Error Magnitude of Simple Corrections in Heterogeneous Phantoms

| Phantom Geometry | Correction Method | Mean Absolute Error (%) | Max Local Error (%) | Key Failure Mode |
|---|---|---|---|---|
| Layered (Skin/Fat/Muscle) | Beer-Lambert | 42 | 155 | Mismatch in layer interface refraction. |
| Embedded Spherical Inclusions | Exponential Decay | 38 | 120 | Scattering "halo" around inclusions uncorrected. |
| Vascular Network Mimic | Pre-computed Library | 25 | 80 | Vessel diameter below method resolution. |
| Realistic Breast Tissue Map | Monte Carlo (10⁸ photons) | 4 | 12 | Gold standard for comparison. |

Experimental Protocols

Protocol 1: Validating Heterogeneity-Induced Error Using Multi-Layer Phantoms

Objective: To quantify the failure of simple attenuation corrections in a controlled, layered tissue-simulating phantom.

Materials: See "Research Reagent Solutions" below. Procedure:

- Phantom Fabrication: Prepare agarose layers (2% w/v) with varying concentrations of Intralipid (scatterer) and India Ink (absorber) to reach the target absorption (µₐ) and reduced scattering (µₛ') coefficients listed below. Pour the layers sequentially into a cuvette, allowing each layer to set (10 min, 4°C) before adding the next. Create a three-layer phantom: Layer 1 (top): µₐ=0.2 cm⁻¹, µₛ'=10 cm⁻¹ (simulating adipose). Layer 2: µₐ=0.8 cm⁻¹, µₛ'=15 cm⁻¹ (simulating stroma). Layer 3: µₐ=0.4 cm⁻¹, µₛ'=12 cm⁻¹.

- Data Acquisition: Place a collimated 650 nm laser source on one side of the phantom. Use a calibrated spectrometer fiber probe on the opposite side (transmission geometry) to measure detected intensity (I). Measure a reference intensity (I₀) through a blank (water) cuvette.

- Simple Correction Application: Calculate apparent attenuation: µ_apparent = -ln(I/I₀) / d, where d is total phantom thickness.

- Ground Truth Measurement: Using embedded isotropic detector fibers at each layer interface, measure the true intensity decay per layer.

- Error Analysis: Compute % error for µ_apparent versus the true weighted average attenuation. Map the fluence rate distribution using a side-imaging CCD camera for visual validation of photon path bending.
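The apparent-attenuation and error-analysis steps reduce to a few lines of Python. This is a minimal sketch that assumes the "true weighted average attenuation" means the thickness-weighted mean of the per-layer coefficients; the function names and example layer values are illustrative, not measured data.

```python
import math

def apparent_attenuation(intensity, reference_intensity, thickness_cm):
    """Homogeneous Beer-Lambert estimate: mu_apparent = -ln(I / I0) / d (cm^-1)."""
    return -math.log(intensity / reference_intensity) / thickness_cm

def weighted_average_attenuation(layer_mu, layer_thickness_cm):
    """Thickness-weighted mean of the per-layer attenuation coefficients (cm^-1)."""
    total_thickness = sum(layer_thickness_cm)
    return sum(mu * d for mu, d in zip(layer_mu, layer_thickness_cm)) / total_thickness

def percent_error(estimate, reference):
    """Signed percent error of an estimate relative to a reference value."""
    return 100.0 * (estimate - reference) / reference

# Hypothetical inputs: replace with the measured I, I0 and the per-layer values
# derived from the interface detector fibers.
layer_mu = [1.0, 2.0, 1.5]   # cm^-1 (illustrative)
layer_d = [0.5, 0.5, 1.0]    # cm (illustrative)
mu_true = weighted_average_attenuation(layer_mu, layer_d)
print(round(mu_true, 3))     # 1.5
```

If every layer were purely absorbing, I = I₀·exp(−Σµᵢdᵢ) and the two estimates would coincide exactly; the discrepancy Protocol 1 measures therefore comes from scattered light and interface effects that the homogeneous formula cannot represent.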

Protocol 2: Monte Carlo Simulation for Benchmarking

Objective: To generate a ground truth dataset for complex tissue geometries against which simple corrections are compared.

Procedure:

- Geometry Definition: Use a segmented histological image (e.g., from a tissue sample) or a mathematical model (e.g., randomly distributed spheres for cells) to define a 2D or 3D domain. Assign each pixel/voxel optical properties from Table 1.

- Simulation Setup: Configure a Monte Carlo photon transport code (e.g., MCX, tMCimg). Key parameters: Number of photons: 10⁷ - 10⁹. Source type: Pencil beam or diffuse. Wavelength: 650 nm. Photon packet weight threshold: 0.001.

- Execution: Run the simulation on a high-performance computing cluster. Record the following outputs: Volumetric fluence rate map, absorption density map, exitance (remission) at the surface.

- Data Synthesis: From the fluence map, compute the effective attenuation coefficient (µeff) for the entire domain. Compare this to the µeff derived by applying a simple homogeneous correction to the simulated surface signal.
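One way to carry out the Data Synthesis step is sketched below in Python with NumPy. It assumes a planar (one-dimensional) depth profile taken from the fluence map that decays exponentially away from the source; for a point source in an infinite medium the diffusion solution goes as exp(−µ_eff·r)/r, so r·φ should be fitted instead. The diffusion-theory expression provides the homogeneous value to compare against. Function names are illustrative.

```python
import numpy as np

def fit_mu_eff(depth_cm, fluence, min_depth_cm=0.0):
    """Estimate mu_eff (cm^-1) from a fluence-vs-depth profile.

    Assumes far-field exponential decay, phi(z) ~ A * exp(-mu_eff * z), and fits
    ln(phi) against z by ordinary least squares. Points shallower than
    min_depth_cm (near the source, where diffusion theory fails) and empty
    voxels (phi <= 0) are excluded.
    """
    z = np.asarray(depth_cm, dtype=float)
    phi = np.asarray(fluence, dtype=float)
    keep = (phi > 0) & (z >= min_depth_cm)
    slope, _intercept = np.polyfit(z[keep], np.log(phi[keep]), 1)
    return -slope

def diffusion_mu_eff(mu_a, mu_s_prime):
    """Diffusion-theory effective attenuation: sqrt(3 * mu_a * (mu_a + mu_s'))."""
    return float(np.sqrt(3.0 * mu_a * (mu_a + mu_s_prime)))

# Homogeneous reference using the Layer 1 values from Protocol 1 (mu_a = 0.2, mu_s' = 10 cm^-1).
print(round(diffusion_mu_eff(0.2, 10.0), 3))  # 2.474
```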

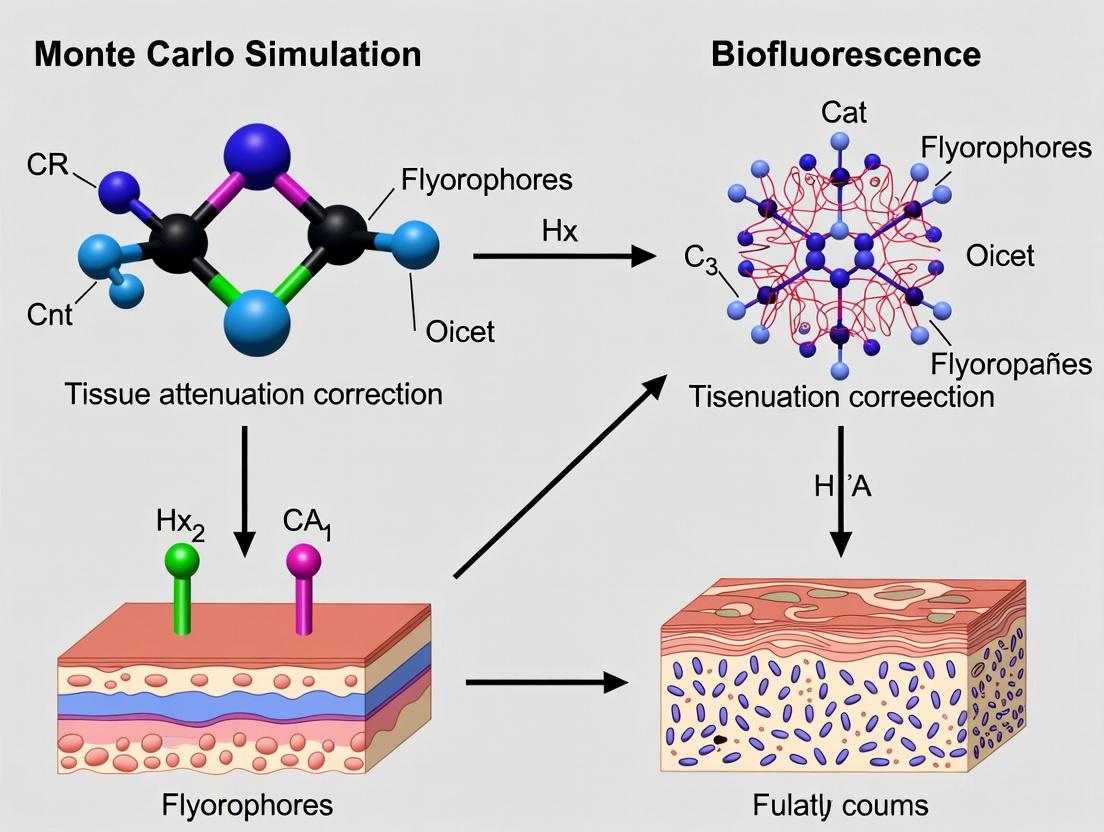

Visualization of Concepts and Workflows

Title: Why Simple Attenuation Corrections Fail

Title: Monte Carlo Simulation Workflow for Benchmarking

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Attenuation Research

| Item & Supplier Example | Function in Experiment | Critical Specification |
|---|---|---|
| Lipid-based Scatterer (e.g., Intralipid 20%, Fresenius Kabi) | Mimics tissue scattering (µs'). Provides stable, reproducible optical phantoms. | Particle size distribution (~0.1-1 µm). Concentration for desired reduced scattering coefficient (µs'). |
| Absorber Dye (e.g., India Ink, Sigma-Aldrich; or NIR dyes like ICG) | Mimics tissue absorption (µa). Allows independent control of absorption and scattering. | Known extinction coefficient at target wavelength. Stability in matrix (no bleaching/aggregation). |
| Tissue-simulating Phantoms (e.g., Silicone-based, Biomimic) | Provides stable, durable 3D geometries with tunable, heterogeneous optical properties. | Long-term stability of µa and µs'. Ability to mold complex shapes (vessels, tumors). |
| Calibrated Spectrometer & Fiber Probes (e.g., Ocean Insight; Avantes) | Measures transmitted/reflected light intensity. Converts photon count to quantifiable signal. | Wavelength range (e.g., 400-900 nm). Integrating sphere for diffuse measurements. |
| High-Performance Computing Cluster (e.g., AWS EC2; local GPU cluster) | Executes Monte Carlo simulations with >10⁷ photons in feasible time (minutes/hours). | GPU memory (≥8 GB). Support for CUDA/OpenCL (for MCX, etc.). |
| Segmented Tissue Atlas (e.g., from Allen Institute; 3D Histology) | Provides realistic digital geometry input for Monte Carlo simulations. | Voxel resolution (≤50 µm). Co-registered anatomical labels. |

Abstract

Within the thesis framework of developing advanced Monte Carlo (MC) simulation techniques for tissue attenuation correction in quantitative Positron Emission Tomography (PET), this Application Note elucidates the foundational principles of stochastic MC methods applied to deterministic radiation transport physics. We detail protocols for modeling photon interactions in biological tissue, a critical step for accurate recovery of activity concentration.

The underlying transport physics is deterministic in the sense that it is fully specified by fixed interaction cross-sections; MC solves it stochastically by randomly sampling the corresponding probability distributions (free path length, interaction type, scattering angle) photon by photon. The central thesis application involves simulating the fate of individual 511 keV annihilation photons as they traverse heterogeneous tissue (e.g., lung, bone, soft tissue) to predict attenuation correction factors (ACFs).

Application Note: Photon Attenuation Simulation

Quantitative Data on Photon Interaction Probabilities (511 keV)

Table 1: Interaction Cross-Sections in Biological Materials (barns/atom, ~511 keV)

| Material / Tissue Type | Photoelectric Effect σ_pe (b/atom) | Compton Scattering σ_comp (b/atom) | Total Attenuation Coefficient μ (cm⁻¹) |
|---|---|---|---|
| Water (Soft Tissue Proxy) | 0.089 | 0.159 | 0.096 |
| Cortical Bone | 0.294 | 0.148 | 0.172 |
| Lung (Inflated) | 0.022 | 0.030 | 0.022 |
| Adipose Tissue | 0.085 | 0.148 | 0.092 |

Table 2: Simulated vs. Theoretical Attenuation Correction Factors (ACF)

| Tissue Path | MC-Simulated ACF (Mean ± SD) | Theoretical ACF | Relative Error (%) |
|---|---|---|---|
| 10 cm Soft Tissue | 2.51 ± 0.03 | 2.55 | 1.57 |
| 4 cm Bone + 6 cm Soft Tissue | 3.18 ± 0.05 | 3.24 | 1.85 |
| 15 cm Lung Equivalent Tissue | 1.38 ± 0.02 | 1.40 | 1.43 |

Experimental Protocols

Protocol 1: Basic Photon Transport Simulation for Attenuation

Objective: To simulate the attenuation of a 511 keV photon beam through a defined tissue geometry. Materials: See "Scientist's Toolkit" below. Procedure:

- Geometry Definition: Digitally define a 3D voxelated phantom using input data (e.g., CT scan). Assign each voxel a material (water, bone, lung) based on Hounsfield Units.

- Source Definition: Initialize photons at a source plane with energy E = 511 keV. Set initial direction vector.

- Step Length Sampling: For each photon, sample a random number ξ₁ ~ U(0,1). Calculate the free path length s = -ln(ξ₁) / μ_t, where μ_t is the total attenuation coefficient of the current voxel material.

- Interaction Decision: Move the photon by distance s and check whether the new position is still within the geometry. If it has exited, tag it as "transmitted" and log its energy at exit. If it is inside, sample a second random number ξ₂ to decide the interaction type (this two-branch scheme omits Rayleigh scattering):

- If ξ₂ ≤ μ_pe / μ_t → Photoelectric absorption. Deposit all energy and terminate the photon history.

- If ξ₂ > μ_pe / μ_t → Compton scattering. Sample the scattering angle θ from the Klein-Nishina distribution using rejection sampling. Update the photon energy and direction vector, then return to the step-length sampling step, using μ_t evaluated at the new energy.

- Tallying: For a transmission geometry tally, record the fraction of photons that exit the phantom with non-zero energy. The ACF is the reciprocal of this transmitted fraction; counting only unscattered (full-energy) photons yields the narrow-beam ACF, exp(∫μ dl).

- Statistics: Run N ≥ 10⁷ photon histories. Calculate mean ACF and standard deviation.
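The transport loop above can be illustrated with a deliberately simplified Python sketch, assuming a homogeneous slab rather than a voxelized phantom. `narrow_beam_acf` keeps only the unscattered (narrow-beam) tally, so it converges to exp(μ_t·x); `sample_klein_nishina` is the rejection sampler a full tracker would call at each Compton event (direction rotation and the energy dependence of μ_t are left out). The μ_t of 0.096 cm⁻¹ is the water entry of Table 1; all function names are illustrative.

```python
import math
import random

ELECTRON_REST_ENERGY_KEV = 511.0

def free_path(mu_t, rng):
    """Sample s = -ln(xi) / mu_t; 1 - random() lies in (0, 1], so the log is finite."""
    return -math.log(1.0 - rng.random()) / mu_t

def sample_klein_nishina(energy_kev, rng):
    """Rejection-sample cos(theta) for Compton scattering (Klein-Nishina).

    Proposal: cos(theta) uniform on [-1, 1]. Acceptance: the KN differential
    cross-section divided by its maximum r_e^2 (reached at theta = 0), i.e.
    f = 0.5 * P^2 * (P + 1/P - sin^2 theta) with P = E'/E, so 0 < f <= 1.
    Returns (cos_theta, scattered_energy_kev).
    """
    k = energy_kev / ELECTRON_REST_ENERGY_KEV
    while True:
        cos_t = 2.0 * rng.random() - 1.0
        p = 1.0 / (1.0 + k * (1.0 - cos_t))  # E'/E from Compton kinematics
        f = 0.5 * p * p * (p + 1.0 / p - (1.0 - cos_t * cos_t))
        if rng.random() <= f:
            return cos_t, energy_kev * p

def narrow_beam_acf(mu_t, thickness_cm, n_photons, rng):
    """Analog narrow-beam ACF through a homogeneous slab.

    A photon counts as transmitted only if its first interaction site lies
    beyond the slab (it exits unscattered at full energy), so the estimate
    converges to exp(mu_t * thickness).
    """
    unscattered = sum(
        1 for _ in range(n_photons) if free_path(mu_t, rng) > thickness_cm
    )
    return n_photons / unscattered

rng = random.Random(42)
acf = narrow_beam_acf(mu_t=0.096, thickness_cm=10.0, n_photons=100_000, rng=rng)
cos_theta, e_scattered = sample_klein_nishina(511.0, rng)
```

Counting every photon that exits with non-zero energy (scatter included) would raise the transmitted fraction and therefore lower the ACF estimate relative to exp(μ_t·x).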

Protocol 2: Variance Reduction for Clinical Feasibility

Objective: To reduce computational time while maintaining statistical accuracy in ACF estimation. Procedure:

- Implement Forced Detection: At each interaction point, bias the photon toward the detector: the particle weight is multiplied by the probability of reaching the detector unscattered.

- Apply Russian Roulette: For photons with weights below a threshold (e.g., 0.01), let each photon survive with probability p and terminate it otherwise; survivors have their weight multiplied by 1/p, which leaves the expected weight unchanged.

- Use Stratified Sampling: Divide the source phase space (position, direction) into strata. Sample evenly from each to ensure better coverage.

- Validation: Compare the mean and variance of ACF from the variance-reduced simulation against a full, analog simulation (Protocol 1) for a simple slab geometry to ensure no bias is introduced.
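A minimal Python sketch of the Russian roulette rule, assuming photon weights are plain floats and a survival probability of 0.1 (an illustrative choice, as is the function name). The property that makes it unbiased is that the mean weight after roulette equals the weight before it, which is also what the validation step checks at the level of the ACF.

```python
import random

def russian_roulette(weight, threshold, survival_prob, rng):
    """Return the photon's new weight (0.0 means the history is terminated).

    Photons at or above the threshold are untouched. Below it, a photon
    survives with probability survival_prob and its weight is divided by
    survival_prob, so the expected weight (and hence every tally) is unchanged.
    """
    if weight >= threshold:
        return weight
    if rng.random() < survival_prob:
        return weight / survival_prob
    return 0.0

rng = random.Random(0)
new_weight = russian_roulette(0.005, threshold=0.01, survival_prob=0.1, rng=rng)
```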

Visualization of Workflows and Pathways

Diagram Title: Monte Carlo Photon Transport Decision Logic

Diagram Title: Research Workflow for MC-Based Attenuation Correction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for MC Simulation in Attenuation Research

| Item / Solution | Function & Role in Protocol |
|---|---|
| Geant4 / GATE / MCNP | MC simulation platform core. Provides physics processes, geometry modeling, and particle tracking. |
| Digital Reference Phantom | Defined geometry (e.g., XCAT, ICRP 110). Serves as the input tissue model for simulation. |
| NIST XCOM Database | Source of validated photon interaction cross-section data for all elements and compounds. |
| High-Performance Computing (HPC) Cluster | Enables running 10⁷–10¹⁰ particle histories in parallel for clinically relevant results. |
| Analysis Toolkit (Python with NumPy, or MATLAB) | For post-processing simulation outputs, calculating ACFs, and statistical analysis. |
| Validation Phantom (e.g., Elliptical Cylinder) | Physical object with known geometry and composition to benchmark simulation accuracy. |

Key Components of a Tissue-Specific MC Simulation Engine

This document details the application notes and protocols for developing a tissue-specific Monte Carlo (MC) simulation engine, a critical sub-module within a broader thesis framework focused on advancing photon and particle transport modeling for quantitative tissue attenuation correction in biomedical imaging and radiation dosimetry.

Core Engine Components & Quantitative Benchmarks

A tissue-specific MC engine integrates specialized modules to accurately model stochastic interactions in complex biological media. The performance and output of these components are summarized below.

Table 1: Key Components of a Tissue-Specific MC Engine

| Component | Primary Function | Key Output/Parameter |
|---|---|---|
| Geometry & Voxelization | Defines tissue boundaries and internal heterogeneity at a voxel level. | Spatial resolution (e.g., 0.5 × 0.5 × 0.5 mm³), Tissue ID map. |
| Physics & Cross-Section Library | Manages interaction probabilities (e.g., Compton, Rayleigh, photoelectric) for particles. | Attenuation coefficients (µ) sourced from NIST or ICRU. |
| Source Definition | Accurately models the emission characteristics of the radiation source (e.g., X-ray tube, isotope). | Spectrum (keV), Activity/Flux, Angular distribution. |
| Particle Tracking & Scoring | Propagates particles and tallies energy deposition, fluence, or transmission. | Dose distribution (Gy), Detection events, Pathlength. |
| Tissue Property Database | Assigns elemental composition, density, and optical properties to each voxel. | Density (g/cm³), Composition (H, C, N, O, etc.), µ(energy). |
| Validation & Uncertainty Quantification | Compares simulation results against benchmark data to establish accuracy. | Gamma pass rate (%, e.g., 2%/2mm), Statistical uncertainty (%). |

Table 2: Example Tissue Properties for a Multi-Organ Digital Phantom

| Tissue Type | Density (g/cm³) | Effective Atomic Number (Z_eff) @ 60 keV | Mass Attenuation Coefficient (cm²/g) @ 100 keV* |
|---|---|---|---|
| Lung (Inflated) | 0.26 | 7.4 | 0.170 |
| Adipose | 0.95 | 5.9 | 0.169 |
| Breast (Glandular) | 1.02 | 7.3 | 0.169 |
| Liver | 1.06 | 7.4 | 0.169 |
| Cortical Bone | 1.92 | 13.0 | 0.186 |

*Data derived from ICRP/ICRU reference databases.

Experimental Protocols for Validation

Protocol 2.1: Validation Against Measured Attenuation in Tissue-Equivalent Phantoms

Objective: To validate the MC engine's accuracy in predicting radiation attenuation through known materials. Materials: Tissue-equivalent slabs (e.g., lung, soft tissue, bone simulants), X-ray source, calibrated ion chamber or spectrometer, simulation engine. Procedure:

- Physical Experiment: a. Align the source and detector along a fixed axis. b. Place a slab of known composition and thickness in the beam path. c. Record the transmitted radiation intensity (I) with the ion chamber. d. Repeat measurement without slab to obtain incident intensity (I₀). e. Calculate experimental attenuation: -ln(I/I₀).

- Simulation Experiment: a. Model the exact experimental geometry in the MC engine. b. Define material properties using reference composition data. c. Simulate a sufficiently large number of incident particles (e.g., 10⁹) with the same source spectrum as the experiment. d. Score transmitted fluence in a virtual detector volume. e. Calculate simulated attenuation.

- Analysis: a. Compare simulated vs. experimental attenuation values across multiple energies (e.g., 50, 80, 120 keV). b. Calculate percent difference. Accept validation if difference < 2% for all energies.
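The comparison and acceptance step can be sketched in Python as follows, assuming attenuation values are stored per beam energy and that the percent difference is taken relative to the measurement (the protocol does not state the reference). The function names and dictionary layout are illustrative.

```python
import math

def attenuation(intensity, reference_intensity):
    """Attenuation as defined in the physical experiment: -ln(I / I0)."""
    return -math.log(intensity / reference_intensity)

def compare_attenuation(measured, simulated, tolerance_pct=2.0):
    """Percent difference (simulated vs. measured) per energy and an overall pass flag.

    measured, simulated: dicts mapping beam energy (keV) -> attenuation.
    Validation passes only if |difference| < tolerance_pct at every energy.
    """
    diffs = {e: 100.0 * (simulated[e] - m) / m for e, m in measured.items()}
    passed = all(abs(d) < tolerance_pct for d in diffs.values())
    return diffs, passed

# Usage with placeholder values at the protocol's example energies (not measured data).
diffs, passed = compare_attenuation({50: 1.00, 80: 0.80, 120: 0.70},
                                    {50: 1.01, 80: 0.81, 120: 0.70})
```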

Protocol 2.2: Benchmarking with Gold-Standard MC Code (e.g., Geant4, MCNP)

Objective: To verify the correctness of the custom engine's particle transport algorithms. Materials: Identical digital phantom (e.g., simple water cylinder with bone insert), precisely defined point source, gold-standard MC code. Procedure:

- Setup Common Geometry: a. Create a standardized input file describing the phantom, source, and scoring mesh. b. Use identical physics settings (cut-off energies, cross-section tables).

- Parallel Execution: a. Run the custom MC engine and the gold-standard code with the same initial random seed (if possible) or a very large number of histories (e.g., 10¹⁰). b. Output 3D dose or fluence maps to a standardized format.

- Analysis with Gamma Index: a. Use a 3D gamma index tool (e.g., 2% dose difference, 2mm distance-to-agreement). b. A pass rate exceeding 98% indicates excellent agreement.
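The gamma criterion can be illustrated in one dimension with the Python sketch below: a brute-force global gamma on two profiles sampled on a common grid. It simplifies the 3D analysis in the protocol (same formula, but the distance search runs along one axis and there is no interpolation between grid points). The 10% low-dose cutoff and all names are assumptions for illustration.

```python
import numpy as np

def gamma_pass_rate_1d(x_mm, ref_dose, eval_dose, dose_pct=2.0, dta_mm=2.0,
                       low_dose_cutoff_pct=10.0):
    """Global 1D gamma pass rate (%) for profiles sampled on the same grid x_mm.

    gamma(r) = min over evaluated points e of
        sqrt(((x_e - x_r) / DTA)^2 + ((D_e - D_r) / (dose_pct% * max(ref)))^2).
    Only reference points at or above the low-dose cutoff are scored.
    """
    x = np.asarray(x_mm, dtype=float)
    ref = np.asarray(ref_dose, dtype=float)
    ev = np.asarray(eval_dose, dtype=float)
    dose_tol = dose_pct / 100.0 * ref.max()
    # Rows index reference points, columns index evaluated points.
    dist_term = (x[None, :] - x[:, None]) / dta_mm
    dose_term = (ev[None, :] - ref[:, None]) / dose_tol
    gamma = np.sqrt(dist_term ** 2 + dose_term ** 2).min(axis=1)
    scored = ref >= low_dose_cutoff_pct / 100.0 * ref.max()
    return 100.0 * np.mean(gamma[scored] <= 1.0)

x = np.arange(0.0, 100.0, 0.5)
reference = np.exp(-((x - 50.0) ** 2) / (2 * 10.0 ** 2))
pass_rate = gamma_pass_rate_1d(x, reference, reference)
```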

Visualization of System Workflow and Relationships

MC Engine Architecture

Attenuation Correction Research Context

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials & Digital Tools for MC-Based Attenuation Research

| Item | Category | Function & Rationale |
|---|---|---|
| Geant4 Toolkit | Software Library | Provides comprehensive, validated physics processes for simulating particle-matter interactions; the benchmark for custom engine development. |
| ICRU Report 44 / ICRP 110 | Reference Data | Standard reference for elemental composition, density, and stopping powers of human tissues; essential for populating the tissue property database. |
| NIST XCOM / ESTAR | Database | Authoritative source for photon cross-sections and electron stopping powers; feeds the physics library. |
| Tissue-Equivalent Phantom | Physical Standard | Physical validation tool with known properties (e.g., Gammex RMI phantom) to bridge simulation and real-world measurements. |
| Digital Reference Phantom | Digital Standard | Voxelized human model (e.g., ICRP/ICRU male/female phantoms) for testing simulations in anatomically realistic geometry. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Enables the simulation of billions of particle histories in a feasible timeframe, crucial for achieving low statistical uncertainty. |
| Statistical Analysis Package | Software | For rigorous uncertainty quantification, gamma index analysis, and comparison of simulation results against experimental data. |

Within the broader thesis on Monte Carlo (MC) simulation for tissue attenuation correction research, the accurate definition of input parameters is foundational. The fidelity of simulated photon transport, energy deposition, and consequent attenuation correction factors hinges on precise models of the underlying tissue geometry and source characteristics. This application note details the protocols for defining three critical input classes: Tissue Composition (elemental make-up and fractional masses), Density Maps (spatial variations in physical density), and Source Distributions (spatial, energetic, and temporal characteristics of the radiation source).

Core Input Definitions & Quantitative Data

Tissue Composition

Tissue composition refers to the elemental weight fractions and cross-sectional data that define interaction probabilities for photons and particles. Standardized tissue types are derived from ICRU/ICRP publications.

Table 1: Standardized Tissue Compositions (ICRU-44 Derived)

| Tissue Type | Density (g/cm³) | H (wt%) | C (wt%) | N (wt%) | O (wt%) | Other (wt%) |
|---|---|---|---|---|---|---|
| Lung (Inflated) | 0.26 - 0.50 | 10.2 | 10.5 | 3.1 | 74.9 | 1.3 (Na, Cl, etc.) |
| Adipose Tissue | 0.95 | 11.4 | 59.8 | 0.7 | 27.8 | 0.3 (P, S, K) |
| Skeletal Muscle | 1.05 | 10.2 | 14.3 | 3.4 | 71.0 | 1.1 (P, S, K, Na, Cl) |
| Cortical Bone | 1.92 | 3.4 | 15.5 | 4.0 | 43.5 | 33.6 (Ca, P, others) |
| Water (Reference) | 1.00 | 11.19 | - | - | 88.81 | - |

Density Maps

Density maps assign a physical density value (g/cm³) to each voxel in a simulation geometry, typically derived from medical imaging.

Table 2: Density Mapping from CT Hounsfield Units (HU)

| Tissue/Material | Typical HU Range | Linear Attenuation Coeff. (µ) at 511 keV (cm⁻¹) | Assigned Density (g/cm³) |
|---|---|---|---|
| Air | -1000 | ~0.000 | 0.0012 |
| Lung | -950 to -600 | 0.030 - 0.050 | 0.26 - 0.50 |
| Fat | -100 to -60 | ~0.086 | 0.95 |
| Water | 0 | 0.095 | 1.00 |
| Soft Tissue | 20 to 80 | 0.095 - 0.100 | 1.03 - 1.06 |
| Bone (Trabecular) | 200 to 400 | 0.120 - 0.150 | 1.10 - 1.30 |
| Bone (Cortical) | >1000 | 0.170 - 0.200 | 1.50 - 2.00 |

Source Distributions

Source distributions define the initial state of simulated particles: spatial origin, energy spectrum, direction, and timing.

Table 3: Common Radionuclide Source Distributions for PET Attenuation Correction

| Radionuclide / Tracer | Imaged Photon Energy (keV) | Spatial Distribution Model | Typical Use Case |
|---|---|---|---|
| ⁸²Rb | 511 (β⁺ annihilation) | Volumetric, based on dynamic PET data | Cardiac perfusion imaging |
| ¹⁸F-FDG | 511 (β⁺ annihilation) | Voxelized, from PET emission scan | Oncology, neuroimaging |
| ⁶⁸Ga-DOTATATE | 511 (β⁺ annihilation) | Voxelized, from PET emission scan | Neuroendocrine tumor imaging |

Experimental Protocols

Protocol 1: Generating Tissue Composition Inputs from Reference Databases

Objective: To create a material file compatible with MC codes (e.g., GEANT4, GATE, MCNP) for a custom tissue type.

- Identify Tissue: Locate the tissue of interest in the ICRU-44 report or the NIST ESTAR/PSTAR databases.

- Extract Data: Record the elemental weight fractions for H, C, N, O, and all listed elements where the fraction exceeds 0.1%.

- Calculate Fraction by Number: Convert weight fractions to atom number fractions using the atomic mass of each element.

- Format for MC Code: For GEANT4, create a .txt file specifying the density, number of elements, and the list of elements with their number fractions.
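The "Calculate Fraction by Number" step can be sketched in Python as below. The atomic weights are standard rounded values; the text layout produced by `format_material` is a hypothetical placeholder, not Geant4's actual material syntax, so it should be adapted to the target code's documentation. Because atom fractions are ratios, they do not change if the weight fractions are renormalized; `normalize` matters only when a code expects mass fractions that sum to 1.

```python
# Standard atomic weights (g/mol), rounded; extend for other elements as needed.
ATOMIC_MASS = {
    "H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "Na": 22.990,
    "P": 30.974, "S": 32.06, "Cl": 35.45, "K": 39.098, "Ca": 40.078,
}

def normalize(fractions):
    """Rescale fractions so they sum to 1 (e.g. after dropping trace elements)."""
    total = sum(fractions.values())
    return {el: f / total for el, f in fractions.items()}

def weight_to_atom_fractions(weight_fractions):
    """Convert elemental weight fractions w_i to atom (number) fractions.

    w_i / A_i is proportional to the number of atoms of element i per gram,
    so atom_fraction_i = (w_i / A_i) / sum_j (w_j / A_j).
    """
    per_gram = {el: w / ATOMIC_MASS[el] for el, w in weight_fractions.items()}
    total = sum(per_gram.values())
    return {el: n / total for el, n in per_gram.items()}

def format_material(name, density_g_cm3, fractions):
    """Hypothetical plain-text layout: name, density, element count, then element/fraction lines."""
    lines = [f"{name} {density_g_cm3:.4f} {len(fractions)}"]
    lines += [f"  {el} {f:.6f}" for el, f in fractions.items()]
    return "\n".join(lines)

# Water from the ICRU-44-derived composition table (weight percent).
water = weight_to_atom_fractions(normalize({"H": 11.19, "O": 88.81}))
print(format_material("Water", 1.00, water))
```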

Protocol 2: Creating Density Maps from CT DICOM Images

Objective: To convert a clinical CT scan into a voxelized density map for MC simulation.

- Image Acquisition: Obtain a volumetric CT scan in DICOM format. Ensure slice thickness and pixel spacing are known.

- Hounsfield Unit (HU) Extraction: Use a toolkit (e.g., Python with pydicom, MATLAB) to load the DICOM series and extract the raw HU value for each voxel.

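- HU-to-Density Calibration: Apply a piecewise linear calibration curve. A common bilinear model is:

- If HU < 0: Density = (HU/1000) + 1.0

- If HU ≥ 0: Density = 1.0 + b × HU, where the slope b is scanner-specific and fitted to bone-equivalent calibration inserts. (If b equals the 0.001 per HU used below 0 HU, the two branches coincide and the model is simply linear.)

- (More sophisticated multi-linear or scanner-specific calibrations may be used.)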

- Segmentation & Assignment: Optionally, segment the image into discrete tissue types (air, lung, soft tissue, bone) and assign a uniform density and composition from Table 1 to each segment.

- Output Format: Save the final 3D density array in a format compatible with the MC software (e.g., RAW binary with a header, or directly into a GATE/GEANT4 geometry file).
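The HU extraction and calibration steps can be sketched in Python with NumPy as below. `raw_to_hu` applies the standard DICOM rescale (stored value × RescaleSlope + RescaleIntercept). The slope above 0 HU is a required argument with no default because it must come from a scanner-specific calibration, and flooring densities at the 0.0012 g/cm³ air value from the density-mapping table is a design choice of this sketch, not part of the original protocol.

```python
import numpy as np

AIR_DENSITY_G_CM3 = 0.0012  # value for air in the density-mapping table

def raw_to_hu(pixel_values, rescale_slope, rescale_intercept):
    """Stored CT pixel values -> HU using the DICOM rescale slope/intercept."""
    return np.asarray(pixel_values, dtype=float) * rescale_slope + rescale_intercept

def hu_to_density(hu, slope_above_water):
    """Bilinear HU-to-density calibration (g/cm^3).

    HU < 0:  rho = 1.0 + HU / 1000            (air-water segment)
    HU >= 0: rho = 1.0 + slope_above_water * HU
    slope_above_water must come from a calibration-phantom fit on the scanner
    in use. Densities are floored at the density of air.
    """
    hu = np.asarray(hu, dtype=float)
    rho = np.where(hu < 0, 1.0 + hu / 1000.0, 1.0 + slope_above_water * hu)
    return np.maximum(rho, AIR_DENSITY_G_CM3)

# With pydicom (ds = pydicom.dcmread(path)), the inputs would typically be:
# hu = raw_to_hu(ds.pixel_array, float(ds.RescaleSlope), float(ds.RescaleIntercept))
```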

Protocol 3: Defining a Voxelized Source Distribution from PET Data

Objective: To model a patient-specific, non-uniform activity distribution for a simulation.

- Source PET Image: Obtain the PET emission scan (counts or activity concentration) in DICOM or ANALYZE format, co-registered with the CT/density map.

- Data Normalization: Convert image counts to absolute activity (Bq) per voxel using the scanner calibration factor and decay correction.

- Smoothing/Thresholding: Apply a Gaussian filter to reduce noise if necessary. Set a threshold (e.g., 5% of maximum) to define the source region and zero out background noise.

- Probability Map Creation: Normalize the voxel activities so the sum across the image equals 1.0, creating a probability density function (PDF) for particle emission.

- MC Implementation: In the simulation code, sample the initial position of each primary particle according to this 3D PDF. Assign the correct particle type (e.g., positron) and energy spectrum based on the radionuclide (Table 3).
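The thresholding, normalization, and sampling steps can be sketched with NumPy as follows, assuming the activity image is already an array co-registered with the density map. Placing each emission at a uniform random point inside the chosen voxel is an assumption of this sketch; positron range and photon non-collinearity are not modeled and would be handled by the MC code. The helper names are hypothetical.

```python
import numpy as np

def activity_to_pdf(activity, threshold_fraction=0.05):
    """Zero voxels below threshold_fraction * max, then normalize to an emission PDF."""
    act = np.asarray(activity, dtype=float)
    act = np.where(act >= threshold_fraction * act.max(), act, 0.0)
    return act / act.sum()

def sample_emission_voxels(pdf, n, rng):
    """Draw n emission voxels, returned as an (n, 3) array of (i, j, k) indices."""
    flat_idx = rng.choice(pdf.size, size=n, p=pdf.ravel())
    return np.column_stack(np.unravel_index(flat_idx, pdf.shape))

def voxel_to_position_mm(indices, voxel_size_mm, origin_mm, rng):
    """Hypothetical helper: uniform random position inside each sampled voxel."""
    jitter = rng.random(indices.shape)
    return np.asarray(origin_mm) + (indices + jitter) * np.asarray(voxel_size_mm)

rng = np.random.default_rng(0)
activity = np.zeros((4, 4, 4))
activity[1, 1, 1], activity[2, 2, 2] = 100.0, 50.0  # toy image, not patient data
pdf = activity_to_pdf(activity)
idx = sample_emission_voxels(pdf, 1000, rng)
positions = voxel_to_position_mm(idx, (2.0, 2.0, 2.0), (0.0, 0.0, 0.0), rng)
```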

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Input Definition

| Item/Software | Function/Benefit |
|---|---|
| ICRU Report 44 (Tissue Substitutes) | Definitive reference for elemental composition and density of biological tissues and phantom materials. |
| NIST XCOM/ESTAR Databases | Provides photon cross-sections and stopping powers, essential for validating material definitions. |
| DICOM Toolkit (e.g., pydicom, ITK) | Libraries for reading, writing, and processing medical imaging data (CT, PET) in standard format. |
| Geant4 Application for Tomographic Emission (GATE) | Open-source MC simulation platform specifically designed for medical physics, with built-in tools for handling density maps and source distributions. |
| 3D Slicer | Open-source software platform for medical image informatics, processing, and 3D visualization; useful for image segmentation and registration. |
| Python (NumPy, SciPy, Matplotlib) | Ecosystem for scripting custom data conversion, analysis, and visualization pipelines for input generation. |
| Anthropomorphic Phantom Data (e.g., XCAT) | Digitally reconstructed patient models providing realistic, adjustable anatomical maps for composition and density. |

Visualization Diagrams

Title: MC Inputs to Outputs Workflow

Title: Source Distribution Definition Tree

Context: These notes detail the core photon-matter interaction models implemented within a Monte Carlo (MC) simulation framework developed for advanced tissue attenuation correction in quantitative molecular imaging (e.g., PET, SPECT). Accurate modeling of these physical processes is the thesis's foundational step for predicting and correcting photon path histories in heterogeneous biological tissues.

The probability of a photon interaction is governed by the total attenuation coefficient μ (cm⁻¹), which depends on the photon energy E and on the material (Z, ρ). Below the 1.022 MeV pair-production threshold:

$$\mu(E) = \mu_{\text{pe}}(E) + \mu_{\text{C}}(E) + \mu_{\text{R}}(E)$$

Table 1: Key Characteristics of Photon Interaction Mechanisms

| Mechanism | Dominant Energy Range (Typical Medical Imaging) | Primary Dependency | Resultant Photon Fate | Key Quantitative Formulae/Notes |
|---|---|---|---|---|
| Photoelectric Absorption | Lower (<~100 keV for soft tissue) | $\propto Z^4/E^{3.5}$ | Photon destroyed. Photoelectron emitted. Characteristic X-rays/Auger electrons may follow. | $\mu_{\text{pe}} = k\,\rho\,Z^4/E^{3.5}$. Dominant in high-Z materials (e.g., bone, iodinated contrast). |
| Compton Scatter (Incoherent) | Intermediate (~60 keV to 10+ MeV) | Electron density, $\rho_e \propto \rho Z/A$ | Photon deflected with reduced energy (E'). Electron recoils. | $\mu_{\text{C}} = N_A\,\rho_e\,\sigma_{\text{KN}}$. The Klein-Nishina cross-section ($\sigma_{\text{KN}}$) describes the angular/energy distribution. |
| Rayleigh Scatter (Coherent) | Lower to Intermediate (<~150 keV) | $\propto Z^2/E^2$ | Photon elastically scattered with negligible energy loss. Direction changed. | $\mu_{\text{R}} = N_A\,\rho\,\sigma_{\text{R}}/A$. The form factor $F(x, Z)$ describes interference effects in atoms. |

Table 2: Example Mass Attenuation Coefficients (μ/ρ in cm²/g) for Water at Key Energies

| Photon Energy | Photoelectric (μ/ρ)_pe | Compton (μ/ρ)_C | Rayleigh (μ/ρ)_R | Total (μ/ρ)_total | Dominant Process |
|---|---|---|---|---|---|
| 30 keV | 0.136 | 0.324 | 0.104 | 0.564 | Compton |
| 100 keV | 0.0207 | 0.155 | 0.0302 | 0.206 | Compton |
| 511 keV | 0.00458 | 0.0960 | 0.00869 | 0.109 | Compton |

Source data: NIST XCOM database. Values are approximations for illustration.
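The interaction-type decision in the MC logic amounts to a single cumulative-probability draw over the partial coefficients. A minimal Python sketch, assuming only the three processes in the tables above (no pair production); the water values at 100 keV come from Table 2 and the function name is illustrative.

```python
import random

def choose_interaction(mu_pe, mu_compton, mu_rayleigh, rng):
    """Select an interaction type with probability mu_i / mu_total.

    Works with either linear (cm^-1) or mass (cm^2/g) coefficients,
    because the density cancels in the ratios.
    """
    mu_total = mu_pe + mu_compton + mu_rayleigh
    xi = rng.random() * mu_total
    if xi < mu_pe:
        return "photoelectric"
    if xi < mu_pe + mu_compton:
        return "compton"
    return "rayleigh"

# Water at 100 keV, mass attenuation coefficients from Table 2 (cm^2/g).
rng = random.Random(0)
kind = choose_interaction(0.0207, 0.155, 0.0302, rng)
```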

Experimental Protocols for Model Validation

Protocol 1: Measurement of Narrow-Beam Attenuation Coefficients

Objective: To empirically determine μ(E) for reference materials to validate MC cross-section libraries. Materials: Radioactive source (e.g., ¹²⁵I, ⁵⁷Co, ¹³⁷Cs), high-purity germanium (HPGe) or NaI(Tl) detector, collimators, reference material slabs (e.g., water, aluminum, PMMA), precision translation stage. Procedure:

- Establish a narrow, well-collimated photon beam from source to detector.

- Acquire reference spectrum, I₀(E), with no absorber present. Count for a statistically significant time.

- Interpose a slab of known thickness (x) of the reference material.

- Acquire transmitted spectrum, I(E).

- Calculate μ(E) using the Beer-Lambert law: I = I₀ * exp(-μ(E) * x). Ensure multiple scattering contributions are negligible (narrow-beam geometry).

- Repeat for various slab thicknesses and photon energies.

- Compare measured μ(E) against MC-predicted values decomposed into photoelectric, Compton, and Rayleigh components.
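For the "repeat for various slab thicknesses" step, μ can be fitted from all thicknesses at once instead of from a single slab. A minimal Python sketch, assuming background-subtracted net peak counts at one photopeak energy (repeat per energy). The unweighted fit and the function names are illustrative choices.

```python
import numpy as np

def fit_mu(thickness_cm, counts, reference_counts):
    """Least-squares mu (cm^-1) from ln(I0 / I) = mu * x, fitted through the origin.

    Unweighted; at low counts, weighting each point by its counts
    (Var[ln I] ~ 1/I for Poisson statistics) would be more appropriate.
    """
    x = np.asarray(thickness_cm, dtype=float)
    y = np.log(float(reference_counts) / np.asarray(counts, dtype=float))
    return float(np.sum(x * y) / np.sum(x * x))

def mass_attenuation(mu_cm, density_g_cm3):
    """Convert a linear coefficient to mu/rho (cm^2/g) for comparison with XCOM."""
    return mu_cm / density_g_cm3
```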

Protocol 2: Angular Scattering Distribution Validation (Compton & Rayleigh)

Objective: To validate the differential cross-section models for Compton and Rayleigh scattering in the MC code. Materials: Gamma source with a dominant emission line (e.g., the 356 keV line of ¹³³Ba), high-resolution detector mounted on a goniometer, thin scatterer (low-Z for Compton, medium-Z for Rayleigh), primary beam collimator. Procedure:

- Position the scatterer at the center of the goniometer.

- With the detector at 0° (direct beam), heavily collimate or attenuate the beam to prevent detector saturation, then acquire a reference spectrum.

- Move detector to a series of angles (θ) from 10° to 150°.

- At each angle, acquire a spectrum. Identify the unshifted full-energy peak (elastic Rayleigh scattering) and the energy-shifted Compton peak.

- Plot normalized scattered photon intensity vs. angle.

- Compare the experimental angular distribution to the theoretical curves predicted by the Klein-Nishina formula (Compton) and form-factor-modified Thomson scattering (Rayleigh) as sampled by the MC simulation.
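To locate the Compton peak at each detector angle and to generate the theoretical comparison curve, the Compton energy relation and the Klein-Nishina differential cross-section can be evaluated directly. This Python sketch targets the 356 keV line; it omits detector efficiency, solid angle, self-absorption in the scatterer, and Rayleigh form factors (which require tabulated F(x, Z) data, e.g. from EPDL97), so only the shape of the normalized curve is comparable. Function names are illustrative.

```python
import numpy as np

ELECTRON_REST_ENERGY_KEV = 511.0

def compton_energy(e_kev, theta_deg):
    """Scattered photon energy E' = E / (1 + (E / m_e c^2) * (1 - cos theta))."""
    cos_t = np.cos(np.radians(theta_deg))
    return e_kev / (1.0 + (e_kev / ELECTRON_REST_ENERGY_KEV) * (1.0 - cos_t))

def klein_nishina_relative(e_kev, theta_deg):
    """Klein-Nishina d(sigma)/d(Omega) divided by r_e^2 (equals 1 at theta = 0)."""
    cos_t = np.cos(np.radians(theta_deg))
    p = compton_energy(e_kev, theta_deg) / e_kev  # E'/E
    return 0.5 * p ** 2 * (p + 1.0 / p - (1.0 - cos_t ** 2))

angles = np.arange(10, 151, 10)  # detector angles from the procedure (degrees)
peak_kev = compton_energy(356.0, angles)
kn_curve = klein_nishina_relative(356.0, angles)
kn_curve = kn_curve / kn_curve.max()  # normalize like the measured intensities
```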

Visualization of Logic and Workflows

Title: Monte Carlo Photon Interaction Decision Logic

Title: MC Model Validation & Refinement Workflow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Materials for Interaction Modeling & Validation Experiments

| Item/Category | Example Product/Specification | Function in Research |
|---|---|---|
| MC Simulation Software | Geant4, GATE, MCNP, Custom C++/Python Code | Platform for implementing and testing photoelectric, Compton, Rayleigh interaction algorithms within complex geometries. |
| Cross-Section Libraries | NIST XCOM, EPDL97 (Evaluated Photon Data Library) | Provide standardized, evaluated theoretical data for interaction coefficients (μ) used as ground truth in MC codes. |
| Anthropomorphic Phantoms | ICRP/ICRU Reference Man-based digital phantoms, Physical water-equivalent phantoms with bone/lung inserts. | Provide realistic geometry and material composition for simulating photon transport in human tissues for attenuation correction studies. |
| Gamma Sources with Dominant Lines | ¹²⁵I (27-35 keV), ⁵⁷Co (122 keV), ¹³³Ba (356 keV) | Enable controlled experimental validation of energy-dependent interaction models at specific energies. |
| High-Resolution Spectrometer | HPGe Detector with Digital MCA (e.g., from ORTEC or Canberra) | Essential for measuring transmitted/scattered spectra with excellent energy resolution to distinguish interaction types. |
| Reference Absorber Set | High-purity Aluminum, PMMA, Graphite, Teflon slabs of calibrated thickness. | Well-characterized materials for measuring attenuation coefficients and validating simulated μ values against experiment. |
| Advanced Collimation | Tungsten or Lead collimators (pinhole, slit, parallel-hole). | Creates narrow-beam geometry for "good" geometry measurements, minimizing scatter contribution during validation. |

From Theory to Practice: Implementing MC Attenuation Correction in Preclinical and Translational Research

Within the broader thesis on Monte Carlo (MC) simulation for tissue attenuation correction research, integrating MC methods into a quantitative imaging pipeline is critical. This integration enhances the accuracy of positron emission tomography (PET) and single-photon emission computed tomography (SPECT) reconstructions by correcting for photon attenuation, scatter, and other physical degrading effects. This application note details the workflow, protocols, and resources for implementing this integration, targeting enhanced precision in preclinical and clinical drug development research.

Core Workflow Diagram

Figure: MC Simulation Integration Pipeline for Attenuation Correction

Key Experimental Protocols

Protocol: Generating a Patient-Specific Voxelized Phantom for MC Input

Objective: Convert clinical CT/MRI data into a format suitable for MC simulation.

- Data Acquisition: Acquire high-resolution (≤1 mm³ voxel) anatomical CT images in DICOM format.

- Image Segmentation: Use validated software (e.g., 3D Slicer, ITK-SNAP) to segment major tissue types (lung, soft tissue, bone, adipose).

- Material Assignment: Map segmented regions to material properties.

- HU-to-Density Calibration: Use a bilinear or multi-linear model derived from a phantom scan.

- Composition Assignment: Assign standard tissue compositions (ICRU reports) based on tissue type and density.

- Voxelization: Export the segmented, material-assigned volume as a 3D matrix (e.g., raw binary data with an Analyze .hdr or MetaImage .mhd header) or directly in a format the MC code reads (e.g., Interfile, Analyze, or MetaImage images for GATE voxelized phantoms).
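A minimal Python sketch of the HU-to-density calibration and tissue-class assignment described above, assuming NumPy. The calibration points and HU thresholds are hypothetical placeholders; in practice they come from a scan of a density calibration phantom and from the segmentation step, and the function names are illustrative only.

```python
import numpy as np

# Hypothetical calibration points (HU, mass density in g/cm^3) standing in for
# values measured on a CT density phantom; replace with your scanner's fit.
CAL_HU = np.array([-1000.0, 0.0, 1000.0, 3000.0])
CAL_RHO = np.array([0.0012, 1.0, 1.60, 2.80])

# Hypothetical HU thresholds: upper edges of the lung, adipose, and soft-tissue classes.
TISSUE_BINS = [-400.0, -50.0, 200.0]
TISSUE_NAMES = ["lung", "adipose", "soft_tissue", "bone"]


def hu_to_density(hu):
    """Multi-linear HU-to-density mapping by interpolating between calibration points.

    Values outside the calibrated HU range are clamped to the end-point densities.
    """
    return np.interp(np.asarray(hu, dtype=float), CAL_HU, CAL_RHO)


def assign_labels(hu):
    """Integer tissue label per voxel (an index into TISSUE_NAMES)."""
    return np.digitize(np.asarray(hu, dtype=float), TISSUE_BINS)


if __name__ == "__main__":
    ct = np.array([[-800.0, -100.0], [40.0, 1200.0]])   # toy 2x2 "CT slice"
    print(hu_to_density(ct))
    print([TISSUE_NAMES[i] for i in assign_labels(ct).ravel()])
```

A piecewise-linear interpolation through several measured points covers both the bilinear and the multi-linear case; with only three points it reduces to a bilinear model.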

Protocol: Executing the Monte Carlo Simulation for Attenuation/Scatter Estimation

Objective: Simulate the transport of photons through the voxelized phantom.

- Simulation Setup:

- Software: Initialize GATE v9.3 (based on Geant4).

- Source Definition: Model the emission of the isotope (e.g., back-to-back 511 keV annihilation photons for F-18, 140 keV photons for Tc-99m). Use a cylindrical or patient-contoured source distribution.

- Physics List: Select the QGSP_BIC_HP_EMZ physics list for accurate low-energy electromagnetic processes.

- Digitizer: Configure the "adder" and "readout" modules to form detector-level pulses, and add a "blurring" module to model the detector's energy resolution.

- Execution: Run on a high-performance computing cluster. Use phase-space files at the phantom surface to store photon data for reuse.

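- Output Processing: Extract the sinogram or list-mode data of detected photons, separating primary (unscattered) from scattered events; attenuation appears as the loss of primary counts relative to an attenuation-free reference simulation.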
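For the output-processing step, the sketch below shows one way to split detected events into primary and scattered sinograms. It assumes each event has already been assigned a sinogram bin and a count of scatter interactions (Compton plus Rayleigh) in the phantom; both arrays are hypothetical inputs extracted from the simulator output beforehand, and the function names are illustrative.

```python
import numpy as np


def split_primary_scatter(bin_index, n_scatter_phantom, n_bins):
    """Histogram detected events into primary and scattered sinograms.

    bin_index         : sinogram bin of each detected event (precomputed, hypothetical)
    n_scatter_phantom : scatter interactions each event underwent in the phantom
                        (for coincidences, the sum over both photons)
    n_bins            : total number of sinogram bins
    """
    bin_index = np.asarray(bin_index)
    scattered = np.asarray(n_scatter_phantom) > 0
    primary = np.bincount(bin_index[~scattered], minlength=n_bins)
    scatter = np.bincount(bin_index[scattered], minlength=n_bins)
    return primary, scatter


def scatter_fraction(primary, scatter):
    """Fraction of all detected events that scattered in the phantom."""
    total = primary.sum() + scatter.sum()
    return scatter.sum() / total if total else 0.0
```

The scatter sinogram produced this way is the quantity later used as the additive scatter estimate in reconstruction.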

Protocol: Integrating MC Output into Image Reconstruction

Objective: Apply the MC-generated correction factors to raw emission data.

- Correction Matrix Formation: From the voxelized phantom used as MC input, derive the 3D attenuation coefficient map (µ-map); from the MC output, generate a scatter estimate sinogram.

- Iterative Reconstruction: Use an ordered-subset expectation maximization (OSEM) algorithm. Fold the µ-map-derived attenuation factors into the system matrix and include the scatter sinogram as an additive term in the forward model.

- Validation: Reconstruct images of a standard phantom (e.g., NEMA IQ) with and without the MC-based correction. Compare quantitative recovery coefficients and contrast-to-noise ratios against known values.
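The reconstruction step can be sketched with a plain NumPy OSEM update for the forward model y ~ Poisson(a·(Px) + s), where the attenuation factors a (exp of minus the µ-map line integral per line of response) are folded into the system matrix and the MC scatter sinogram s is added in the forward projection. This is a toy dense-matrix illustration under those assumptions, not the implementation of CASToR or any scanner; the function and argument names are hypothetical.

```python
import numpy as np


def osem(y, P, atten, scatter, n_subsets=4, n_iter=10, x0=None):
    """OSEM for the forward model  y ~ Poisson(atten * (P @ x) + scatter).

    y       : measured counts per line of response (LOR), shape (n_lor,)
    P       : nonnegative geometric system matrix, shape (n_lor, n_vox)
    atten   : attenuation factors exp(-line integral of mu) per LOR
    scatter : additive scatter estimate per LOR (e.g., an MC scatter sinogram)
    """
    n_lor, n_vox = P.shape
    x = np.ones(n_vox) if x0 is None else np.asarray(x0, dtype=float).copy()
    A = atten[:, None] * P                        # attenuation folded into the system matrix
    subsets = [np.arange(b, n_lor, n_subsets) for b in range(n_subsets)]
    for _ in range(n_iter):
        for idx in subsets:
            A_b = A[idx]
            expected = A_b @ x + scatter[idx]     # scatter enters additively, not through A
            ratio = np.divide(y[idx], expected,
                              out=np.zeros(len(idx)), where=expected > 0)
            sens = A_b.sum(axis=0)                # subset sensitivity image
            update = np.divide(A_b.T @ ratio, sens,
                               out=np.ones(n_vox), where=sens > 0)
            x = x * update                        # multiplicative EM update keeps x >= 0
    return x
```

Interleaving LORs into subsets means each sub-iteration uses only part of the data, which is the source of OSEM's speed-up over plain MLEM.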

Table 1: Impact of MC-Based Correction on Quantitative PET Accuracy (NEMA IQ Phantom Simulation)

| Metric | No Correction | Analytical Correction (Chang) | MC-Based Correction |
|---|---|---|---|
| Background Uniformity (%SD) | 18.5% | 12.2% | 8.7% |
| Hot Sphere Recovery (10 mm) | 42% | 68% | 92% |
| Cold Sphere Contrast | 0.55 | 0.78 | 0.94 |
| Root Mean Square Error (RMSE) | 28.1% | 15.4% | 6.2% |

Table 2: Computational Resources for Different MC Simulation Scenarios

| Scenario | Software | Simulated Photons | Compute Time (CPU-hours) | Output Size (GB) |
|---|---|---|---|---|
| Whole-Body FDG-PET (Adult) | GATE v9.3 | 5 × 10⁹ | ~12,000 | 450 |
| Mouse Brain SPECT | GATE v9.3 | 1 × 10⁸ | ~250 | 15 |
| Digital Chest Phantom CT | Geant4 11.2 | 1 × 10¹⁰ | ~8,000 | 120 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for MC-Integrated Imaging Pipelines

| Item/Category | Example Product/Software | Function in Workflow |
|---|---|---|
| MC Simulation Platform | GATE (Geant4 Application for Tomographic Emission) | Open-source toolkit for simulating radiation transport in medical imaging and therapy. |
| Anatomical Segmentation | 3D Slicer | Open-source platform for medical image segmentation and 3D model generation. |
| HU-to-Density Converter | CT Calibration Phantom (CIRS Model 062) | Physical phantom with known density inserts to establish the CT Hounsfield Unit to density relationship. |
| Reference Tissue Data | ICRU Report 44 (Tissue Compositions) | Provides standardized elemental compositions and densities of biological tissues. |
| Reconstruction Engine | CASToR (Customizable & Advanced Software for Tomographic Reconstruction) | Flexible open-source framework that allows direct integration of MC-generated system matrices. |
| Validation Phantom | NEMA NU 2/IQ PET Phantom | Standardized physical phantom for evaluating quantitative imaging performance. |
| High-Performance Compute | SLURM Workload Manager | Manages and schedules MC simulation jobs on computing clusters. |

Signaling and Data Flow Diagram

Figure: Data Flow in MC-Based Correction Pipeline

Building Anatomically Realistic Digital Phantoms (Mouse, Rat, Primate)

Within Monte Carlo (MC) simulation research for quantitative tissue attenuation correction in molecular imaging (e.g., PET, SPECT), the accuracy of the simulation is fundamentally limited by the anatomical realism of the digital phantom used. This document details the application notes and protocols for constructing species-specific digital phantoms, which serve as the essential 3D input for MC radiation transport codes, enabling precise modeling of photon attenuation, scattering, and absorption in tissues.

Table 1: Primary Sources for Species-Specific Anatomical Templates

| Species | Primary Data Source | Modality | Key Use Case | Typical Resolution | Public Access |
|---|---|---|---|---|---|
| Mouse (C57BL/6J) | Digimouse Atlas | CT / Cryosection | Whole-body, multi-organ segmentation | 0.1 mm isotropic | Yes |
| Mouse | MOBY/ROBY Phantoms | MRI | Cardiac & whole-body, deformable | 0.1-0.2 mm | Yes |
| Rat (Sprague-Dawley) | RATSEG Atlas | MRI | Neuroimaging, multi-organ | 0.2 mm isotropic | Yes |
| Rat | 4D XCAT (Rodent version) | CT/MRI | Dynamic, breathing, cardiac cycles | Variable | License |
| Primate (Rhesus) | PRIMATE Atlas (UNC-Wisconsin) | MRI/PET | Neuroimaging, whole-body | 0.5-1.0 mm isotropic | Yes |
| Primate | NHP Atlas (SCC/Siemens) | CT/MRI | Multi-organ, Skeletal | 0.4 mm isotropic | Collaborative |

Table 2: Tissue Material Properties for Monte Carlo Input

| Tissue Type | Density (g/cm³) | Linear Attenuation Coeff. @ 511 keV (cm⁻¹)* | Composition Model (ICRU/ICRP) | Source |
|---|---|---|---|---|
| Adipose | 0.95 | 0.092 | ICRP-110 | NIST Database |
| Muscle | 1.05 | 0.100 | ICRU-44 | NIST Database |
| Bone (Cortical) | 1.92 | 0.172 | ICRP-110 | NIST Database |
| Lung (Exhale) | 0.26 | 0.025 | ICRP-110 | NIST Database |
| Brain (Grey Matter) | 1.04 | 0.100 | ICRP-110 | NIST Database |
| Water | 1.00 | 0.096 | - | NIST Database |

*Example values; vary with exact energy & composition.

Experimental Protocols

Protocol 1: Constructing a Mouse Phantom from Multi-Modal Data

Objective: Integrate high-resolution CT and cryosection data to create a voxelized phantom with segmented organs.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition & Co-registration:

- Acquire whole-body in vivo micro-CT scan of an anesthetized mouse. Apply respiratory gating if necessary.

- Sacrifice the same animal and perform high-resolution cryosection imaging (e.g., with the Visible Mouse project protocol).

- Use rigid (6-parameter) followed by non-rigid (B-spline) registration algorithms in software like 3D Slicer to align the in vivo CT with the ex vivo cryosection atlas.

- Segmentation & Label Map Generation:

- Using the registered, high-fidelity cryosection data as "ground truth," manually or semi-automatically segment major organs (brain, heart, lungs, liver, kidneys, bone, muscle, adipose).

- Assign a unique integer Label ID to each tissue type in a 3D matrix.

- Material Property Assignment:

- For each Label ID in the 3D matrix, assign corresponding density and linear attenuation coefficient values from a predefined lookup table (see Table 2).

- Formatting for MC Code:

- Export the final 3D label map and the corresponding material property table in a format compatible with the target MC simulator (e.g., GATE/Geant4's "INTERFILE" format, MCNP's lattice geometry).
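The material-property assignment step can be done with a single array lookup. The sketch below, assuming NumPy and hypothetical label IDs mapped to the 511 keV values of Table 2, converts a label map into a μ-map and computes a crude parallel-ray transmission as a sanity check before export. Every label present in the map must appear in the table; unlisted labels below the maximum silently map to 0.

```python
import numpy as np

# Hypothetical label IDs mapped to 511 keV linear attenuation coefficients (cm^-1),
# taken from Table 2; replace with your own lookup table.
MU_511 = {
    0: 0.000,  # air / outside the body
    1: 0.100,  # muscle
    2: 0.092,  # adipose
    3: 0.172,  # cortical bone
}


def labels_to_mu(label_map, mu_table):
    """Convert an integer label map into a mu-map via a lookup array."""
    lut = np.zeros(max(mu_table) + 1)
    for label, mu in mu_table.items():
        lut[label] = mu
    return lut[label_map]            # NumPy fancy indexing: one lookup per voxel


def transmission_along_axis(mu_map, voxel_cm, axis=0):
    """Parallel-ray transmission exp(-sum(mu * dl)) along one array axis."""
    return np.exp(-mu_map.sum(axis=axis) * voxel_cm)
```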

Protocol 2: Generating a 4D (Dynamic) Rat Phantom for Motion Correction Studies

Objective: Create a time-series of 3D phantoms simulating respiratory and cardiac motion for MC simulations of motion-blurred PET data.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Base Mesh Creation:

- Start with a high-resolution, static 3D rat phantom (e.g., from RATSEG). Convert the segmented organs into surface meshes (e.g., using STL format).

- Motion Modeling:

- Respiration: Apply a parametric deformation field to the thoracic and abdominal organs. Diaphragm motion is typically modeled as a sinusoidal translation, with associated scaling/translation of lungs and liver.

- Cardiac Motion: For the heart, use a simplified contractile model, applying time-dependent scaling and shape deformation to the ventricular meshes based on ECG-gated CT data.

- Voxelization of Time Frames:

- For each time point in the motion cycle (e.g., 10 frames over a breathing cycle), rasterize the deformed organ meshes back into a 3D label map voxel grid.

- Integration into MC Workflow:

- In the MC simulation script (e.g., GATE macro), sequentially read the series of 3D label maps, updating the phantom geometry at a frequency matching the simulated physiological cycle.
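The motion-modeling and frame-scheduling steps of Protocol 2 can be sketched as follows, assuming a raised-cosine (sinusoidal) diaphragm trajectory, a fixed number of frames per breathing cycle, and hypothetical function names. Rasterizing the deformed meshes into label maps is outside the scope of this snippet.

```python
import math


def diaphragm_displacement(t, period, amplitude_mm):
    """Sinusoidal craniocaudal diaphragm displacement in mm.

    0 at end-expiration (t = 0), maximal (amplitude_mm) at mid-cycle.
    """
    return 0.5 * amplitude_mm * (1.0 - math.cos(2.0 * math.pi * t / period))


def frame_schedule(period, n_frames, amplitude_mm):
    """(start time, displacement) for each frame of one breathing cycle."""
    schedule = []
    for k in range(n_frames):
        t = k * period / n_frames
        schedule.append((t, diaphragm_displacement(t, period, amplitude_mm)))
    return schedule


def frame_for_time(t, period, n_frames):
    """Index of the label map that should be active at simulated time t."""
    phase = (t % period) / period
    return min(int(phase * n_frames), n_frames - 1)
```

Looking up the frame from the simulated time, rather than stepping frames independently of time, keeps the phantom geometry locked to the modeled physiological cycle.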

Visualization of Workflows

Figure: Mouse Phantom Construction Pipeline

Figure: Monte Carlo Simulation for Attenuation Correction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for Digital Phantom Development

| Item / Reagent | Function / Purpose | Example Vendor/Software |
|---|---|---|
| High-Resolution Imaging System | Acquiring anatomical template data (CT, MRI, cryo-imaging). | Bruker SkyScan, MR Solutions, MILabs, Visible Mouse Project. |
| Image Registration Software | Aligning multi-modal and serial imaging datasets. | 3D Slicer, Elastix, ANTs (Advanced Normalization Tools). |
| Segmentation Platform | Delineating organs and tissues in 3D image stacks. | ITK-SNAP, Amira, Mimics, 3D Slicer. |
| Mesh Generation & Editing Tool | Creating and deforming organ surface models for 4D phantoms. | Blender, MeshLab, CGAL, 3-MATIC. |
| Monte Carlo Simulation Platform | Performing radiation transport using the digital phantom. | GATE/Geant4, GAMOS, MCNP, SIMIND. |
| Material Property Database | Providing tissue composition, density, and attenuation coefficients. | NIST XCOM/PSTAR, ICRP/ICRU Reports. |
| Scientific Computing Environment | Scripting pipelines, data conversion, and analysis. | Python (NumPy, SciPy, PyTorch), MATLAB, Julia. |

Application Notes for Tissue Attenuation Correction Research

Accurate quantification of radiotracer distribution in emission tomography (e.g., PET, SPECT) requires precise correction for photon attenuation within biological tissue. Monte Carlo (MC) simulation provides the gold-standard method for modeling this complex physical interaction, enabling the development and validation of correction algorithms. The selection and application of specific software tools are critical for research fidelity.

The following table summarizes the core characteristics of prominent MC simulation tools in this domain:

Table 1: Comparison of Monte Carlo Simulation Software for Attenuation Studies

| Feature / Tool | GATE (Geant4 Application for Tomographic Emission) | GAMOS (GEANT4-based Architecture for Medicine-Oriented Simulations) | SimSET (Simulation System for Emission Tomography) | Custom Code Solutions |
|---|---|---|---|---|
| Core Engine | Geant4 Toolkit | Geant4 Toolkit | Its own Photon History Generator (PHG) | Varies (e.g., Geant4, Python, C++) |
| Primary Strength | Extreme flexibility & accuracy; de facto standard for validation. | User-friendly abstraction layer over Geant4; streamlined workflow. | Highly optimized for speed in clinical PET/SPECT simulation. | Tailored to specific, novel geometries or physics processes. |
| Computational Cost | Very High | High | Low to Moderate | Variable (can be optimized for a single task) |
| Ease of Use | Steep learning curve | Moderate, simplifies Geant4 complexity | Moderate | Requires advanced programming expertise |
| Best Suited For | Designing novel scanner geometries, validating commercial algorithms, simulating complex physics. | Rapid prototyping of medical physics simulations, educational use. | Generating large clinical-like datasets for algorithm testing. | Investigating non-standard physics, integrating with proprietary research code. |
| Key Consideration for Attenuation Correction | Can model detailed, voxelized anatomical phantoms (e.g., XCAT) with tissue-specific attenuation coefficients. | Shares Geant4 accuracy with simplified scripting for phantom definition. | Uses parameterized phantoms; faster photon tracking but with less geometric detail. | Can implement analytical attenuation models directly for hybrid approaches. |

Experimental Protocols

Protocol 1: Validation of a CT-based Attenuation Correction Method using GATE

Objective: To validate the accuracy of a novel CT-to-μ-map conversion algorithm for PET.

Methodology:

- Phantom Definition: A voxelized digital phantom (e.g., the 4D XCAT phantom) is defined in GATE. Materials (lung, soft tissue, bone) are assigned based on Hounsfield Unit (HU) ranges with precise elemental compositions.

- Source Simulation: A uniform or focal distribution of a common radionuclide (e.g., ⁸⁹Zr, ¹⁸F) is simulated within the phantom.

- Attenuation Reference (Ground Truth): The true attenuation sinogram is generated by GATE by recording the path length of each annihilation photon through each tissue type before detection.

- Simulated CT & Test μ-map: A simulated CT projection is generated. The novel conversion algorithm is applied to this CT data to produce a test μ-map.

- Image Reconstruction & Comparison: PET data is reconstructed twice: (A) using the true GATE attenuation map, and (B) using the test algorithm-derived μ-map. Quantitative comparison is performed using metrics like Bias (%) or Root Mean Square Error (RMSE) in Regions of Interest (ROIs).
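The ground-truth attenuation and the ROI comparison metrics in Protocol 1 reduce to short formulas. The sketch below assumes per-LOR path lengths through each tissue class are already available (for example, tallied from the simulation) and computes the attenuation correction factor ACF = exp(Σ μᵢLᵢ), plus the percent bias and normalized RMSE used to compare reconstructions (A) and (B). Tissue names, μ values (illustrative 511 keV coefficients), and function names are assumptions, and normalizing RMSE by the reference mean is one common convention among several.

```python
import math
import numpy as np

# Illustrative 511 keV linear attenuation coefficients (cm^-1).
MU_511 = {"soft_tissue": 0.096, "adipose": 0.092, "bone": 0.172}


def attenuation_correction_factor(path_lengths_cm, mu_table):
    """ACF = exp(sum_i mu_i * L_i), with L_i the combined path length of both
    annihilation photons through tissue i along one LOR."""
    line_integral = sum(mu_table[tissue] * length
                        for tissue, length in path_lengths_cm.items())
    return math.exp(line_integral)


def roi_bias_rmse(test_img, ref_img, roi_mask):
    """Percent bias of the ROI mean and normalized RMSE (%) of test vs. reference."""
    t = test_img[roi_mask]
    r = ref_img[roi_mask]
    ref_mean = r.mean()
    bias = 100.0 * (t.mean() - ref_mean) / ref_mean
    rmse = 100.0 * np.sqrt(np.mean((t - r) ** 2)) / ref_mean
    return bias, rmse
```

For example, an LOR crossing 15 cm of soft tissue and 2 cm of bone has a line integral of 0.096 × 15 + 0.172 × 2 = 1.784, giving an ACF of e^1.784 ≈ 5.95.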

Protocol 2: Benchmarking Reconstruction Speed vs. Accuracy using SimSET

Objective: To determine the optimal iteration count for OSEM reconstruction when using simulated clinical data.

Methodology:

- Data Generation with SimSET: SimSET is used to generate 100 noisy projection datasets of a standard digital phantom (e.g., Hoffman brain phantom) using its fast photon history generator, modeling attenuation and scatter.

- Reconstruction Pipeline: All datasets are reconstructed using an Ordered-Subsets Expectation-Maximization (OSEM) algorithm with attenuation correction. The number of iterations is varied (e.g., 1, 2, 4, 8, 16) while subsets are kept constant.

- Analysis: The reconstructed images are compared to the "ground truth" phantom activity. A plot of Noise versus Bias (or Resolution) is generated for each iteration count to identify the point of diminishing returns.
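For Protocol 2, the noise-versus-bias analysis can be computed per iteration count from the ensemble of noisy reconstructions. The sketch assumes the reconstructions for one iteration count are stacked in a NumPy array. Defining noise as the voxel-wise standard deviation across realizations is one common choice, not the only one, and the function name is hypothetical.

```python
import numpy as np


def ensemble_bias_noise(recons, truth, roi):
    """Percent bias and percent noise of an ROI over noisy realizations.

    recons : array (n_realizations, *image_shape) reconstructed at one iteration count
    truth  : noise-free phantom activity, shape image_shape
    roi    : boolean mask, shape image_shape
    bias   : (ensemble-mean ROI value - true ROI mean) / true ROI mean * 100
    noise  : voxel-wise ensemble std (ddof=1) averaged over the ROI / true mean * 100
    """
    vals = recons[:, roi]                  # (n_realizations, n_roi_voxels)
    true_mean = truth[roi].mean()
    bias = 100.0 * (vals.mean() - true_mean) / true_mean
    noise = 100.0 * vals.std(axis=0, ddof=1).mean() / true_mean
    return bias, noise
```

Calling this once per iteration count (1, 2, 4, 8, 16) yields the (bias, noise) pairs that trace the curve used to locate the point of diminishing returns.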

Protocol 3: Implementing a Hybrid Analytical-Monte Carlo Scatter Estimate

Objective: To develop a fast, accurate scatter correction model by integrating custom code with GAMOS.

Methodology:

- GAMOS Simulation for Training: GAMOS is used to simulate a broad set of simple geometric phantoms (spheres, cylinders) with known activity and attenuation. The true scatter sinograms are extracted.

- Custom Code Development: A Python-based analytical model (e.g., based on the Single Scatter Simulation approximation) is developed. Its parameters are trained and optimized using the GAMOS-generated scatter data as the target.

- Validation: The trained custom model is applied to a complex, anthropomorphic phantom simulation in GAMOS. Its scatter estimate is compared against the true GAMOS scatter output for final validation of accuracy and speed gain.
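Protocol 3 trains an analytical model's parameters against GAMOS scatter data. The sketch below does not implement Single Scatter Simulation; as a deliberately simpler stand-in, it fits a two-parameter Gaussian tail model to a one-dimensional MC scatter profile, finding the width by grid search and solving for the amplitude in closed form. The model form and function names are assumptions for illustration.

```python
import numpy as np


def gaussian_kernel(r, sigma):
    """Unit-amplitude Gaussian tail as a function of radial bin offset r."""
    return np.exp(-0.5 * (r / sigma) ** 2)


def fit_scatter_model(r, s_mc, sigmas):
    """Fit s(r) ~ a * exp(-r^2 / (2 sigma^2)) to an MC scatter profile.

    sigma is chosen by grid search; for each candidate, the amplitude has the
    closed-form least-squares solution a = <k, s> / <k, k>.
    Returns (a, sigma, sum of squared errors) for the best candidate.
    """
    best = None
    for sigma in sigmas:
        k = gaussian_kernel(r, sigma)
        a = (k @ s_mc) / (k @ k)
        sse = float(np.sum((a * k - s_mc) ** 2))
        if best is None or sse < best[2]:
            best = (a, sigma, sse)
    return best
```

Separating the linear parameter (amplitude) from the nonlinear one (width) keeps the fit robust without a general-purpose optimizer; a real SSS-based model would have different parameters but could be trained with the same loop structure.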

Visualization

Figure: MC Tool Selection Workflow for Attenuation Studies

Figure: GATE Protocol for Attenuation Correction Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for MC-Based Attenuation Correction Research

| Item | Function & Relevance in Research |
|---|---|
| Digital Anthropomorphic Phantoms (e.g., 4D XCAT, NCAT) | Software-based models of human anatomy with time-varying dynamics. Provide voxelized "ground truth" geometry and tissue definitions essential for realistic simulation of photon attenuation. |
| Standardized Data Phantoms (e.g., NEMA NU-2/IEC) | Digital or physical specifications for performance evaluation. Allow benchmarking of attenuation correction methods across different research groups and software tools. |
| Tissue Composition & Attenuation Coefficient Libraries (e.g., ICRP 110, NIST databases) | Tabulated data on elemental composition, density, and photon cross-sections of biological tissues. Critical for assigning accurate material properties in MC simulations. |
| High-Performance Computing (HPC) Cluster or Cloud Credits | MC simulations are computationally intensive. Access to parallel computing resources is a prerequisite for producing statistically significant results in a reasonable time. |
| DICOM Toolkit (e.g., dcmtk, pydicom) | Software libraries for reading, writing, and converting medical imaging data. Enable importing clinical CT scans as attenuation maps and exporting simulation results for analysis. |
| Quantitative Image Analysis Software (e.g., 3D Slicer, AMIDE, custom MATLAB/Python scripts) | Tools for region-of-interest (ROI) analysis, image registration, and calculation of metrics (SUV, bias, noise) to quantitatively evaluate correction performance. |

This application note details the critical role of quantitative tracer uptake measurement in Positron Emission Tomography (PET) and Single-Photon Emission Computed Tomography (SPECT), framed within a broader thesis research program investigating advanced Monte Carlo simulation methods for tissue attenuation correction. Accurate quantification is foundational for validating and refining simulation-derived correction algorithms, which aim to minimize artifacts from photon attenuation, scatter, and partial volume effects, thereby enhancing the precision of pharmacokinetic and dosimetry studies in drug development.

Core Quantitative Metrics & Data

Table 1: Key Quantitative Metrics in PET/SPECT Tracer Uptake Analysis

| Metric | Acronym | Formula/Description | Primary Application |
|---|---|---|---|
| Standardized Uptake Value | SUV | (Tissue Activity Concentration [kBq/mL]) / (Injected Dose [kBq] / Body Weight [g]), with activities decay-corrected to a common reference time | Semi-quantitative assessment of tracer concentration. |
| SUV normalized to Lean Body Mass | SUL | (Tissue Activity Concentration) / (Injected Dose / Lean Body Mass) | Reduces variability from body fat content. |
| Percent Injected Dose per Gram | %ID/g | (Tissue Activity Concentration [kBq/g] / Injected Dose [kBq]) × 100 | Common in preclinical studies for biodistribution. |
| Target-to-Background Ratio | TBR | (SUV or Mean Counts in Target Region) / (SUV or Mean Counts in Reference Background Region) | Quantifies lesion contrast relative to a reference region. |
| Patlak Slope (Ki) | Ki | Derived from dynamic imaging; represents the net influx rate constant of an irreversibly trapped tracer. | Absolute quantification of net tracer uptake rate (e.g., glucose metabolic rate with FDG). |
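The metrics in Table 1 translate directly into code. The sketch below is a minimal NumPy version, assuming activities are already decay-corrected to a common reference time and that lean body mass is supplied externally. The Patlak function applies the standard graphical relation to frames after an assumed equilibration time t_star; all function names are illustrative.

```python
import numpy as np


def suv(conc_kbq_per_ml, injected_kbq, body_weight_g):
    """SUV assuming a tissue density of 1 g/mL."""
    return conc_kbq_per_ml / (injected_kbq / body_weight_g)


def sul(conc_kbq_per_ml, injected_kbq, lean_body_mass_g):
    """SUV normalized to lean body mass (LBM supplied by the user)."""
    return conc_kbq_per_ml / (injected_kbq / lean_body_mass_g)


def percent_id_per_g(conc_kbq_per_g, injected_kbq):
    return 100.0 * conc_kbq_per_g / injected_kbq


def tbr(target, background):
    return target / background


def patlak_ki(t, c_tissue, c_plasma, t_star):
    """Patlak graphical analysis for an irreversibly trapped tracer.

    For t >= t_star:  C_T(t)/C_p(t) = Ki * (integral_0^t C_p) / C_p(t) + V0.
    Returns (Ki, V0) from a straight-line fit.
    """
    t = np.asarray(t, dtype=float)
    ct = np.asarray(c_tissue, dtype=float)
    cp = np.asarray(c_plasma, dtype=float)
    # cumulative trapezoidal integral of the plasma input function, starting at 0
    cum = np.concatenate(([0.0], np.cumsum(0.5 * (cp[1:] + cp[:-1]) * np.diff(t))))
    late = t >= t_star
    x = cum[late] / cp[late]
    y = ct[late] / cp[late]
    ki, v0 = np.polyfit(x, y, 1)       # slope = Ki, intercept = V0
    return ki, v0
```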

Table 2: Impact of Monte Carlo-Based Attenuation Correction (MC-AC) on Quantification

| Study Type | Tracer | Without MC-AC (Mean SUV ± SD) | With MC-AC (Mean SUV ± SD) | % Change in Mean with MC-AC* | Key Finding |
|---|---|---|---|---|---|
| Thoracic Oncology (PET/CT) | 18F-FDG | 5.2 ± 1.8 | 8.1 ± 2.1 | +55.8% | MC-AC significantly corrected for low-density lung tissue attenuation. |
| Brain Dopamine Imaging (PET) | 18F-FDOPA | 1.5 ± 0.4 | 2.2 ± 0.5 | +46.7% | Improved striatum-to-cerebellum contrast, critical for kinetic modeling. |
| Myocardial Perfusion (SPECT) | 99mTc-Sestamibi | 2.0 ± 0.6 (Counts) | 3.1 ± 0.7 (Counts) | +55.0% | Reduced diaphragmatic and breast attenuation artifacts. |
| Preclinical Tumor Model (PET) | 89Zr-DFO-mAb | 4.3 ± 1.2 | 5.8 ± 1.4 | +34.9% | Enhanced accuracy of antibody biodistribution and tumor uptake. |

*Relative change in the mean value, computed as (With MC-AC − Without MC-AC) / Without MC-AC; the corrected values are referenced to a ground-truth phantom measurement or gold-standard method.

Experimental Protocols

Protocol 1: Phantom Validation of Monte Carlo Attenuation Correction

Aim: To validate a Monte Carlo simulation package for tissue attenuation correction using a physical phantom with known activity concentrations.

Materials: NEMA NU-2/IEC Body Phantom, 18F-FDG solution, PET/CT or PET/MRI scanner, Monte Carlo simulation software (e.g., GATE, SimSET, or custom code), analysis workstation (e.g., PMOD, MATLAB).

Procedure:

- Phantom Preparation: Fill the phantom's spheres (simulating lesions) and background compartment with 18F-FDG solution at a known sphere-to-background ratio (e.g., 4:1). Accurately record activity concentrations and filling times.

- Image Acquisition: Position the phantom in the scanner. Acquire a CT scan for anatomic reference and attenuation map generation. Perform a PET list-mode acquisition for a duration sufficient to achieve >10 million true counts.

- Monte Carlo Simulation: Using the CT-derived attenuation map, simulate the PET acquisition of the phantom geometry using the Monte Carlo engine. Input the true activity distribution and simulate physical processes (attenuation, scatter, randoms, detector response).

- Image Reconstruction & Correction:

- Standard Method: Reconstruct the clinical PET data using the scanner's built-in correction algorithms (e.g., CT-based attenuation correction, model-based scatter correction).

- MC-AC Method: Use the simulated scatter and attenuation data from the Monte Carlo Simulation step to inform a dedicated reconstruction or to correct the emission sinograms.

- Quantitative Analysis: Draw volumetric regions of interest (VOIs) on each sphere and the background. Record the measured SUVmean and SUVmax for each VOI in both the standard and MC-AC reconstructed images.

- Validation: Compare the measured SUV values from both methods against the known true activity concentration. Calculate recovery coefficients and quantification bias.
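The validation step can be summarized per sphere as a recovery coefficient, a percent bias against the known concentration, and a hot-sphere contrast recovery of the form (C_H/C_B − 1)/(a_H/a_B − 1), as used in NEMA NU 2 image-quality analysis. The dictionary layout and function name below are hypothetical; measured values may be SUVmean or activity concentrations, as long as all inputs share units.

```python
def sphere_metrics(measured_means, true_sphere_conc, bkg_mean, true_ratio):
    """Per-sphere recovery coefficient, percent bias, and hot-sphere contrast recovery.

    measured_means   : dict sphere label -> measured mean concentration in the VOI
    true_sphere_conc : known sphere activity concentration (same units)
    bkg_mean         : measured mean background concentration
    true_ratio       : known sphere-to-background activity ratio (e.g., 4.0)
    """
    out = {}
    for label, m in measured_means.items():
        rc = m / true_sphere_conc
        bias = 100.0 * (m - true_sphere_conc) / true_sphere_conc
        contrast = (m / bkg_mean - 1.0) / (true_ratio - 1.0)
        out[label] = {"rc": rc, "bias_pct": bias, "contrast": contrast}
    return out
```

Running the same function on the standard and MC-AC reconstructions gives directly comparable per-sphere tables.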

Protocol 2: In Vivo Preclinical Assessment of a Novel Tracer

Aim: To quantify the biodistribution and tumor uptake of a novel 89Zr-labeled therapeutic antibody in a murine xenograft model.

Materials: Athymic nude mice with subcutaneously implanted tumor xenografts, 89Zr-DFO-conjugated antibody, microPET/CT scanner, dose calibrator, gamma counter, dissection tools.

Procedure:

- Tracer Administration: Intravenously inject each mouse (n=5-8/group) with a precise activity of 89Zr-mAb (e.g., 100 µCi ± 5%). Record exact injection time and residual syringe activity.

- Serial PET/CT Imaging: Anesthetize mice and image at multiple time points post-injection (e.g., 1, 24, 48, 72, 96h). Acquire a CT scan followed by a static PET scan (e.g., 15-minute acquisition).

- Image Reconstruction & MC-AC: Reconstruct PET data with and without the thesis-developed Monte Carlo attenuation correction algorithm. Use a mouse-specific CT-derived density map as input for the MC simulation.

- Ex Vivo Biodistribution: After the final imaging time point, euthanize mice. Collect blood, major organs (heart, lungs, liver, spleen, kidneys), muscle, bone, and tumor. Weigh all samples and measure radioactivity in a gamma counter. Express results as %ID/g.

- Data Correlation: Correlate the in vivo PET-derived SUV or %ID/g values (from MC-AC images) with the ex vivo gamma counting results. Perform linear regression analysis to assess quantification accuracy.
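The %ID/g denominator in this protocol should be the net activity at injection time, and image- and counter-derived values must be decay-corrected consistently. The sketch below assumes an ⁸⁹Zr physical half-life of 78.41 h and uses hypothetical function names; it decay-corrects the pre-injection and residual syringe readings and performs the linear regression and Pearson correlation between in vivo and ex vivo values.

```python
import numpy as np

ZR89_HALF_LIFE_H = 78.41  # physical half-life of 89Zr in hours


def decay_factor(elapsed_h, half_life_h=ZR89_HALF_LIFE_H):
    """Fraction of activity remaining after elapsed_h hours."""
    return 2.0 ** (-elapsed_h / half_life_h)


def net_injected_activity(pre_syringe, hours_before_injection,
                          residual, hours_after_injection,
                          half_life_h=ZR89_HALF_LIFE_H):
    """Net activity at injection time: pre-injection syringe reading decayed
    forward to injection, minus the residual reading decayed back to injection."""
    pre_at_inj = pre_syringe * decay_factor(hours_before_injection, half_life_h)
    res_at_inj = residual / decay_factor(hours_after_injection, half_life_h)
    return pre_at_inj - res_at_inj


def regress(imaging_values, gamma_values):
    """Least-squares line and Pearson r between imaging and ex vivo %ID/g."""
    x = np.asarray(imaging_values, dtype=float)
    y = np.asarray(gamma_values, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)
    r = np.corrcoef(x, y)[0, 1]
    return slope, intercept, r
```

A slope near 1 with a small intercept indicates that the MC-AC images reproduce the gamma-counter reference, while r alone only measures linear association.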

Diagrams

Figure: Monte Carlo Attenuation Correction Workflow

Figure: From Tracer Injection to Quantified Image

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 3: Essential Materials for Quantitative PET/SPECT Tracer Studies

| Item | Category | Function & Relevance to Quantification |
|---|---|---|
| NEMA/IEC Image Quality Phantom | Phantom | Gold-standard physical tool for validating scanner performance, recovery coefficients, and correction algorithms in a controlled geometry. |
| GATE/Geant4 or SimSET Software | Monte Carlo Simulation Platform | Enables realistic simulation of radiation transport through complex anatomies (from CT maps), generating correction factors for attenuation and scatter. |
| PMOD, Hermes, or MIM Software | Image Analysis Suite | Provides robust tools for image registration, VOI analysis, kinetic modeling (e.g., Patlak), and extraction of quantitative metrics (SUV, Ki). |
| Radiolabeled Reference Standards | Calibration Source | Sources with known activity and geometry are essential for calibrating the scanner and gamma counter, ensuring accurate activity concentration measurements. |
| CT Scan of Subject/Phantom | Anatomic & Density Map | Provides the essential tissue density map required as input for Monte Carlo simulations to model photon attenuation accurately. |
| High-Precision Dose Calibrator | Instrumentation | Critical for measuring the exact activity of the injected tracer dose, which is the denominator in all uptake calculations (SUV, %ID/g). |
| Isotope-Specific Calibration Factor | Software/Database | A scanner-specific conversion factor that translates detected counts into units of activity (Bq/mL), mandatory for cross-scanner comparison. |

In the broader thesis research on Monte Carlo simulation for tissue attenuation correction, this application note addresses a central practical challenge: the quantitative inaccuracy of bioluminescence imaging (BLI) and fluorescence molecular imaging (FMI) caused by photon absorption and scattering, which depend strongly on the depth of the light source and the optical properties of the overlying tissue. Accurate correction is paramount for translating photon counts into meaningful biological metrics (e.g., tumor burden, gene expression) in preclinical drug development.

Core Principles of Depth-Dependent Attenuation

Light propagation through living tissue is governed by the reduced scattering coefficient (μs') and the absorption coefficient (μa). The detected signal I(d, λ) from a source at depth d and wavelength λ is attenuated according to the modified Beer-Lambert law and complex scattering physics, which Monte Carlo methods simulate stochastically.

Table 1: Optical Properties of Common Tissues at Relevant Wavelengths

| Tissue Type | Wavelength (nm) | Absorption Coefficient μa (cm⁻¹) | Reduced Scattering Coefficient μs' (cm⁻¹) | Effective Attenuation Coefficient μeff (cm⁻¹)* |
|---|---|---|---|---|
| Skin (Murine) | 600 (Red) | 0.2 - 0.4 | 12 - 16 | 2.7 - 4.4 |
| Muscle (Murine) | 600 (Red) | 0.3 - 0.5 | 14 - 18 | 3.6 - 5.3 |
| Brain (Murine) | 600 (Red) | 0.2 - 0.3 | 10 - 14 | 2.5 - 3.6 |
| Liver (Murine) | 600 (Red) | 0.8 - 1.5 | 8 - 12 | 4.6 - 7.8 |
| Lung (Murine) | 600 (Red) | 0.4 - 0.7 | 16 - 22 | 4.4 - 6.9 |
| Typical Tumor | 600 (Red) | 0.3 - 0.6 | 13 - 20 | 3.5 - 6.1 |
| All Tissues | 700 (NIR) | Lower | Slightly Lower | Significantly Lower |

Data synthesized from recent literature (2023-2024) on preclinical tissue optics. Near-infrared (NIR) light exhibits lower attenuation, favoring fluorophores such as ICG.

*Computed from the tabulated μa and μs' ranges using the diffusion approximation μeff = √(3μa(μa + μs')).
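Under the diffusion approximation, the absorption and reduced scattering coefficients combine into the effective attenuation coefficient μeff = √(3μa(μa + μs')), and far from the source the detected signal falls off roughly as exp(−μeff·d). The sketch below computes both. It is a first-order estimate that ignores boundary effects and heterogeneous geometry, which is precisely what Monte Carlo simulation adds; the function names are illustrative.

```python
import math


def mu_eff(mu_a, mu_s_prime):
    """Diffusion-approximation effective attenuation coefficient (units of the inputs, e.g. cm^-1)."""
    return math.sqrt(3.0 * mu_a * (mu_a + mu_s_prime))


def depth_attenuation(depth_cm, mu_a, mu_s_prime):
    """Fraction of signal remaining, exp(-mu_eff * d), for a source at depth d."""
    return math.exp(-mu_eff(mu_a, mu_s_prime) * depth_cm)
```

For example, μa = 0.3 cm⁻¹ and μs' = 14 cm⁻¹ give μeff ≈ 3.59 cm⁻¹, so a source 3 mm deep retains about exp(−1.08) ≈ 0.34 of its signal under this approximation.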

Correction Methodologies & Protocols

Protocol 1: Multi-Spectral Imaging for Analytical Correction

This method uses light at multiple wavelengths to solve for depth and source intensity.

- Animal Preparation: Implant tumor cells expressing both a bioluminescent (e.g., firefly luciferase) and a near-infrared fluorescent (e.g., iRFP720) reporter.

- Image Acquisition:

- Administer D-luciferin (150 mg/kg, i.p.) and acquire a bioluminescent image sequence (typically 1-5 min exposure, binning 4-8).

- Without moving the animal, acquire multi-wavelength fluorescence excitations (e.g., 675 nm, 745 nm) for the NIR fluorophore with appropriate emission filters.

- Acquire a white-light surface image.

- Data Processing:

- Use the ratio of fluorescence signals at different wavelengths, which have known but differential attenuation profiles, to compute an effective depth (d) of the source via a pre-computed Monte Carlo lookup table.

- Apply the depth-specific attenuation correction factor (derived from μeff for the bioluminescence wavelength) to the raw BLI photon counts.

- Output a corrected radiance value (p/s/cm²/sr).
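The data-processing steps above can be sketched as a lookup-table inversion. Here the table is generated from a simple exponential model as a stand-in for the pre-computed Monte Carlo table; the μeff values, the intrinsic emission ratio (the ratio a superficial source would show), and all function names are hypothetical.

```python
import numpy as np

# Hypothetical effective attenuation coefficients (cm^-1).
MU_EFF_SHORT = 4.0   # shorter fluorescence band: attenuates more
MU_EFF_LONG = 3.0    # longer fluorescence band
MU_EFF_BLI = 4.5     # bioluminescence wavelength


def build_lut(depths_cm, intrinsic_ratio=1.0):
    """Stand-in for an MC lookup table: ratio(d) = intrinsic * exp(-(mu1 - mu2) * d)."""
    return intrinsic_ratio * np.exp(-(MU_EFF_SHORT - MU_EFF_LONG) * depths_cm)


def depth_from_ratio(ratio, depths_cm, lut_ratio):
    """Invert the table; np.interp needs increasing x, and the ratio falls with
    depth, so both arrays are reversed."""
    return np.interp(ratio, lut_ratio[::-1], depths_cm[::-1])


def corrected_bli(raw_radiance, depth_cm, mu_eff_bli=MU_EFF_BLI):
    """Undo depth attenuation of the raw BLI signal: raw * exp(mu_eff * d)."""
    return raw_radiance * np.exp(mu_eff_bli * depth_cm)
```

Replacing build_lut with tabulated MC results leaves the inversion and correction steps unchanged, as long as the simulated ratio remains monotonic in depth.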

Protocol 2: Monte Carlo Simulation-Based 3D Reconstruction

A more computationally intensive method that iteratively matches simulation to data.

- Pre-computation: Generate a vast database of Monte Carlo simulations for point sources at various depths (0-10 mm, 0.1 mm steps) and lateral positions within a digital mouse model of known tissue optical properties.

- Experimental Data: Acquire multi-projection bioluminescence images (e.g., dorsal, ventral, lateral) using a highly sensitive 3D optical imager.

- Reconstruction:

- Segment the animal CT scan to define tissue regions (skin, muscle, lung, etc.).

- Assign literature-based μa and μs' to each region.

- Use an iterative algorithm (e.g., Bayesian inference or gradient descent) to find the 3D source distribution whose simulated surface photon distribution best matches the acquired multi-projection data.

- The output is a 3D map of corrected source intensity (in units of photons/s/voxel).
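The iterative step amounts to a nonnegative inverse problem: if column j of a sensitivity matrix A holds the simulated surface signal for a unit source in voxel j (drawn from the MC database), the source map x minimizes ½‖Ax − b‖² subject to x ≥ 0, where b stacks the measured projections. The sketch below uses projected gradient descent as one simple instance of the gradient-descent option; A and b are assumed to be pre-assembled, and the function name is hypothetical.

```python
import numpy as np


def reconstruct_sources(A, b, n_iter=500, step=None):
    """Minimize 0.5 * ||A x - b||^2 subject to x >= 0 by projected gradient descent.

    A : (n_surface_measurements, n_voxels) sensitivity matrix
    b : measured surface data stacked over all projections
    """
    if step is None:
        # 1 / L, where L = sigma_max(A)^2 is the Lipschitz constant of the gradient
        step = 1.0 / np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        grad = A.T @ (A @ x - b)                 # gradient of 0.5 * ||A x - b||^2
        x = np.maximum(x - step * grad, 0.0)     # project onto x >= 0
    return x
```

The nonnegativity projection encodes the physical constraint that source intensity cannot be negative, which also stabilizes the otherwise ill-posed inversion.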

Table 2: Comparison of Attenuation Correction Methods

| Method | Key Principle | Required Data Input | Output | Advantages | Limitations |
|---|---|---|---|---|---|
| Multi-Spectral (Analytical) | Spectral unmixing & diffusion theory | BLI + Multi-wavelength FMI | 2D Corrected Radiance & Estimated Depth | Faster, commercially available software | Assumes homogeneous tissue; less accurate for deep, complex sources |
| Monte Carlo 3D Reconstruction | Stochastic photon transport simulation | Multi-projection BLI + Co-registered CT/MRI | 3D Source Distribution (Quantitative) | Anatomically accurate; gold standard for quantification | Computationally expensive; requires multimodal imaging & digital atlas |
| Hybrid Simplified SP3 Method | Solves simplified radiative transport equation | Single-view BLI + Approximate Mouse Contour | Approximate 3D Correction | Good balance of speed and accuracy | Less precise than full Monte Carlo |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Attenuation-Corrected BLI/FMI

| Item | Function | Example/Note |
|---|---|---|
| D-Luciferin (Potassium Salt) | Substrate for firefly luciferase, generates ~560-620 nm bioluminescence. | Standard for BLI. Dose: 150 mg/kg i.p. in PBS. Stable at -20°C. |
| Coelenterazine (Native) | Substrate for Renilla or Gaussia luciferase, produces ~480 nm blue light. | Used for dual-reporter assays. Rapid kinetics, inject just before imaging. |
| NIR Fluorophores (e.g., IRDye 800CW, ICG-analogs) | Fluorescent probes excitable in NIR window (680-800 nm) for multi-spectral depth sensing. | Low tissue absorption; enables Protocol 1. Conjugate to targeting agents. |
| Reporter Cell Lines (Dual BLI/FMI) | Cells stably expressing both a luciferase and a NIR fluorescent protein. | Essential for co-registered, multi-spectral studies. Validate expression stability. |
| Matrigel or PBS/Media Mix | Vehicle for consistent subcutaneous or orthotopic cell implantation. | Affects initial dispersal and local optics. Use consistent volume/concentration. |
| Isoflurane/Oxygen Anesthesia System | Maintains animal immobilization and physiological stability during prolonged imaging. | Vaporizer (3-4% induction, 1-2% maintenance). Anesthetic affects tissue oxygenation (μa). |
| Liquid Phantom Kit (Lipid-based) | Calibration standards with known μa and μs' to validate imager performance and correction algorithms. | e.g., Intralipid dilutions with ink. Critical for protocol standardization. |
| Digital Mouse Atlas (e.g., Digimouse) | 3D volumetric model with segmented tissues. | Provides anatomical prior and optical property maps for Monte Carlo simulation (Protocol 2). |

Visualized Workflows & Pathways

Title: Multi-Spectral Depth Correction Workflow

Title: Monte Carlo 3D Reconstruction Process

Title: Thesis Context: MC Simulation Applications

Solving Computational Challenges: Accuracy, Speed, and Convergence in MC Simulations

Introduction

Within Monte Carlo (MC) simulation research for tissue attenuation correction in quantitative PET and SPECT imaging, the central challenge is the trade-off between statistical precision (noise) and computational burden. This document provides application notes and protocols for optimizing this balance, framed within a broader thesis on developing accelerated, clinically viable MC-based correction methods.

Theoretical Framework

Monte Carlo methods estimate photon transport through biological tissue by simulating individual particle histories. The relative standard error (RSE) of the estimated attenuation correction factor is inversely proportional to the square root of the number of simulated photon histories (N): RSE ∝ 1/√N. Computational time (T) scales linearly with the number of histories, T ∝ N, so T ∝ 1/RSE². In practice, halving the RSE requires quadrupling N and therefore roughly quadrupling T. The optimal balance is application-dependent and is dictated by the precision required for downstream pharmacokinetic or dosimetry analyses.
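The scaling relations above can be illustrated with a minimal analog MC sketch. It assumes a narrow-beam geometry through a uniform water slab with scatter ignored, so transmission is simply the probability that a sampled free path exceeds the slab thickness. The function names are hypothetical. The 0.096 cm⁻¹ coefficient is the 511 keV water value from the attenuation table later in this document.

```python
import math
import random


def simulate_transmission(mu_cm, thickness_cm, n_histories, seed=0):
    """Analog MC estimate of narrow-beam transmission through a uniform slab.

    Each photon's free path is sampled as s = -ln(U) / mu; the photon counts as
    transmitted if s exceeds the slab thickness. Scatter is ignored (assumption),
    so any interaction removes the photon from the beam.
    """
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_histories):
        u = 1.0 - rng.random()  # uniform on (0, 1], avoids log(0)
        if -math.log(u) / mu_cm > thickness_cm:
            transmitted += 1
    p = transmitted / n_histories
    # Binomial standard error; the relative standard error scales as 1/sqrt(N)
    se = math.sqrt(p * (1.0 - p) / n_histories)
    rse = se / p if p > 0 else float("inf")
    return p, rse


def histories_for_target(n0, rse0, rse_target):
    """Histories needed to reach rse_target, given RSE proportional to 1/sqrt(N)."""
    return n0 * (rse0 / rse_target) ** 2


def projected_time(t0, n0, n):
    """Wall-clock time for n histories, given T proportional to N and a measured (n0, t0)."""
    return t0 * n / n0


if __name__ == "__main__":
    mu_water_511 = 0.096  # cm^-1, water at 511 keV
    exact = math.exp(-mu_water_511 * 10.0)
    for n in (1_000, 10_000, 100_000):
        p, rse = simulate_transmission(mu_water_511, 10.0, n)
        print(f"N={n:>7d}  transmission={p:.4f}  RSE={rse:.4f}  exact={exact:.4f}")
    # Halving the RSE of a 1e8-history, 21 h run needs 4e8 histories (about 84 h)
    n_new = histories_for_target(1e8, 2.5, 1.25)
    print(n_new, projected_time(21.0, 1e8, n_new))
```

Each tenfold increase in N should shrink the printed RSE by roughly √10. Production codes such as GATE add scatter, detector response, and variance reduction on top of this basic free-path sampling.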

Experimental Protocols

Protocol 1: Benchmarking Computational Time vs. Noise for a Standard Phantom

- Objective: To empirically establish the T = f(N) and Noise = g(N) relationships for a baseline geometry.

- Materials: See "Research Reagent Solutions."

- Methodology:

- Define a digital reference phantom (e.g., XCAT anthropomorphic model) with known tissue attenuation maps (μ-maps).

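- Using the GATE MC platform, simulate the transport of 10^6, 10^7, 5×10^7, 10^8, and 5×10^8 photon histories from a source distribution within the phantom. The source should comprise a uniform background region and an embedded hot lesion of known activity, since both ROIs are analyzed below. Use an energy spectrum relevant to your isotope (e.g., 511 keV annihilation photons for F-18).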

- Record the wall-clock time for each simulation on a defined computational setup (specify CPU/GPU type, cores, memory).

- For each simulation output (sinogram), reconstruct the image using a standard algorithm (e.g., OSEM).

- In a uniform region of interest (ROI), calculate the noise as the percentage coefficient of variation (%CV = [standard deviation / mean] * 100). In a hot lesion ROI, calculate the bias relative to the "true" activity defined in the simulation input.

- Analysis: Plot T vs. N and %CV vs. N on log-log axes and fit power laws. The theory predicts slopes of approximately +1 for T (T ∝ N) and -0.5 for %CV (RSE ∝ 1/√N).
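The Analysis step can be sketched with an ordinary least-squares fit on log10-transformed data. The helper name `loglog_slope` is illustrative. The inputs are the baseline values reported in Table 1 of this section, and the fitted exponents come out near +1 for time and -0.5 for noise.

```python
import math


def loglog_slope(x, y):
    """Least-squares slope of log10(y) against log10(x), i.e. the exponent k in y ~ x**k."""
    lx = [math.log10(v) for v in x]
    ly = [math.log10(v) for v in y]
    mean_x = sum(lx) / len(lx)
    mean_y = sum(ly) / len(ly)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
    sxx = sum((a - mean_x) ** 2 for a in lx)
    return sxy / sxx


# Baseline (no VRT) values from Table 1 of this section
histories = [1e6, 1e7, 5e7, 1e8, 5e8]
time_hours = [0.25, 2.1, 10.5, 21.0, 104.5]
noise_cv = [25.4, 8.1, 3.6, 2.5, 1.1]

time_exponent = loglog_slope(histories, time_hours)  # theory: +1
noise_exponent = loglog_slope(histories, noise_cv)   # theory: -0.5
print(f"T ~ N^{time_exponent:.2f}, %CV ~ N^{noise_exponent:.2f}")
```

A time exponent slightly below 1 can reflect fixed per-run overhead (e.g., geometry initialization) that dominates the smallest runs.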

Protocol 2: Evaluating Variance Reduction Technique (VRT) Efficacy

- Objective: To quantify the change in the noise-time trade-off curve when applying VRTs.

- Methodology:

- Using the same phantom setup as Protocol 1, implement a VRT such as photon splitting with Russian Roulette or an importance sampling scheme based on the pre-computed μ-map.

- Run simulations for the same number of effective histories (accounting for the VRT's weighting) as in Protocol 1.

- Record computational time, including any overhead from VRT setup.

- Calculate the noise and bias in the reconstructed images as in Protocol 1.

- Compute the Figure of Merit (FoM): FoM = 1 / (%CV² * T). Because %CV² ∝ 1/N and T ∝ N, the FoM of a given method is approximately independent of N, so a higher FoM indicates a more efficient method.

- Analysis: Compare the FoM across different VRTs and the baseline simulation. A successful VRT shifts the noise-time curve downward (less noise for the same time).
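The FoM comparison can be sketched as follows, normalizing each method to the baseline. The sketch assumes every run reached exactly 2.5 %CV, which Table 2 of this section only states approximately. Under that assumption the relative FoM reduces to a ratio of run times and reproduces the tabulated values of 3.82 and 1.38. The function names are hypothetical.

```python
def figure_of_merit(cv_percent, time_hours):
    """FoM = 1 / (%CV^2 * T), in units of %^-2 h^-1."""
    return 1.0 / (cv_percent ** 2 * time_hours)


def relative_fom(runs, baseline):
    """FoM of each run divided by the FoM of the named baseline run."""
    ref = figure_of_merit(*runs[baseline])
    return {name: figure_of_merit(cv, t) / ref for name, (cv, t) in runs.items()}


# (%CV, time in hours) at the ~2.5 %CV target, from Table 2 of this section
runs = {
    "Baseline (No VRT)": (2.5, 21.0),
    "Photon Splitting (5x)": (2.5, 5.5),
    "Importance Sampling": (2.5, 15.2),
}
rel = relative_fom(runs, "Baseline (No VRT)")
for name, value in rel.items():
    print(f"{name:<24s} {value:.2f}")
```

If the runs end at different %CV values, pass the measured %CV for each one. The squared noise term then keeps the comparison fair.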

Data Presentation

Table 1: Baseline Simulation Results (No VRT)

| Photon Histories (N) | Computational Time (T), hours | Noise in ROI (%CV) | Bias in Lesion (%) |
|---|---|---|---|
| 1.00E+06 | 0.25 | 25.4 | -12.7 |
| 1.00E+07 | 2.1 | 8.1 | -4.3 |
| 5.00E+07 | 10.5 | 3.6 | -1.9 |
| 1.00E+08 | 21.0 | 2.5 | -1.0 |
| 5.00E+08 | 104.5 | 1.1 | -0.5 |

Table 2: Comparison of Variance Reduction Techniques (for ~2.5% CV Target)

| Simulation Method | Photon Histories (N) | Time to Target (hrs) | Relative FoM (Baseline = 1.00) |
|---|---|---|---|
| Baseline (No VRT) | 1.00E+08 | 21.0 | 1.00 (Ref) |
| Photon Splitting (5x) | 2.00E+07 | 5.5 | 3.82 |
| Importance Sampling | 5.00E+07 | 15.2 | 1.38 |

Visualizations

Trade-off Between N, Time, and Noise in MC

Workflow for Optimizing MC ACF Simulations

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in MC Attenuation Research |
|---|---|
| GATE/Geant4 | Open-source MC simulation platform for modeling particle transport in complex geometries like human anatomy. |
| XCAT/NURBS Digital Phantom | Provides a realistic, customizable model of human anatomy with defined tissue types and attenuation coefficients. |
| Cluster/GPU Computing Resources | Essential for parallelizing photon history simulations to reduce wall-clock time for large N. |
| DICOM CT/MR Patient Data | Source for generating patient-specific attenuation maps (μ-maps) as simulation input, improving clinical relevance. |
| ROI Analysis Software (e.g., 3D Slicer) | For quantifying noise, bias, and recovery coefficients in reconstructed simulation output images. |
| Variance Reduction Scripts | Custom algorithms (e.g., splitting, forced detection) implemented within the MC code to improve sampling efficiency. |

Application Notes

In Monte Carlo (MC) simulation for tissue attenuation correction in quantitative imaging (e.g., PET, SPECT), the accuracy of the simulated radiation transport is paramount. Two foundational, often underestimated, pitfalls directly compromise the validity of the correction factors generated: (1) reliance on inaccurate or outdated photon/electron interaction cross-section data, and (2) the use of oversimplified geometrical models of patient anatomy. Within the thesis on advancing MC methods for clinical translation, addressing these pitfalls is critical for moving from proof-of-concept to robust, regulatory-grade correction techniques.

Pitfall 1: Inaccurate Cross-Section Data

MC simulations rely on databases (e.g., NIST XCOM, EPDL97) for the probabilities of photoelectric absorption, coherent (Rayleigh) and incoherent (Compton) scattering, and, above the 1.022 MeV threshold, pair production. Using default, simplified, or outdated libraries introduces systematic biases in estimated attenuation. These biases are largest at low energies (<100 keV) and in materials of higher effective atomic number (e.g., bone, iodinated contrast). Recent benchmarks show discrepancies of up to 5-8% in dose deposition and 3-5% in detected photon flux when legacy data (XCOM) are compared against modern, high-fidelity evaluations (EPDL2017, Geant4-DNA libraries).

Pitfall 2: Oversimplified Geometry

Representing complex human anatomy (e.g., lung parenchyma, trabecular bone) as homogeneous volumes with uniform density neglects sub-voxel heterogeneities. This "voxel-averaging" leads to significant errors in scatter estimation and path-length calculations. Studies indicate that using a stylized, block-based phantom versus a patient-specific, voxelized CT-derived geometry can alter calculated attenuation correction factors by 10-15% in thoracic imaging and over 20% in regions with metallic implants.

Table 1: Impact of Cross-Section Data Source on Simulated Attenuation Coefficient (μ) in Water

| Energy (keV) | NIST XCOM μ (cm⁻¹) | EPDL2017 μ (cm⁻¹) | Percent Difference | Clinical Relevance |
|---|---|---|---|---|
| 30 | 0.151 | 0.158 | +4.6% | Low-energy SPECT |
| 70 | 0.195 | 0.200 | +2.6% | CT (effective energy), Tl-201 SPECT |
| 140 | 0.150 | 0.151 | +0.7% | Tc-99m SPECT |
| 511 | 0.096 | 0.096 | +0.1% | PET |
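A small difference in μ matters because it is multiplied by the path length inside the exponent of the narrow-beam attenuation law, T = exp(-μx). The sketch below assumes a narrow beam with no scatter and an illustrative 10 cm water path, and uses the 30 keV row above. Under these assumptions the 4.6% difference in μ becomes a transmission (and hence ACF) difference of roughly 7%. The function names are hypothetical.

```python
import math


def transmission(mu_cm, path_cm):
    """Narrow-beam (Beer-Lambert) transmission exp(-mu * x); scatter ignored."""
    return math.exp(-mu_cm * path_cm)


def transmission_ratio(mu_ref, mu_test, path_cm):
    """Transmission computed with mu_test relative to that computed with mu_ref."""
    return transmission(mu_test, path_cm) / transmission(mu_ref, path_cm)


# 30 keV water values from the table above (XCOM vs. EPDL2017), 10 cm path (assumption)
ratio = transmission_ratio(0.151, 0.158, 10.0)
acf_shift_percent = 100.0 * (1.0 / ratio - 1.0)  # ACF is the reciprocal of transmission
print(f"transmission ratio = {ratio:.4f}, ACF shift = {acf_shift_percent:.1f} %")
```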

Table 2: Error in Attenuation Correction Factor (ACF) from Geometry Simplification

| Anatomical Region | Homogeneous Model ACF | Heterogeneous Model ACF | ACF Difference (Heterogeneous minus Homogeneous) | Key Omitted Structure |
|---|---|---|---|---|
| Lung (mid-field) | 0.45 | 0.52 | +0.07 | Vessel branching |
| Skull base | 1.85 | 2.15 | +0.30 | Trabecular bone |
| Abdomen (liver) | 1.22 | 1.18 | -0.04 | Portal vasculature |
| Hip (w/ implant) | 3.10 | 4.25 | +1.15 | Implant microstructure |

Experimental Protocols

Protocol 1: Benchmarking Cross-Section Library Performance

Objective: To quantify the dosimetric and detection error introduced by different photon cross-section libraries in a controlled MC simulation.

Materials: Geant4 (v11.1) or GATE (v9.3) MC toolkit; Reference phantoms (e.g., ICRU sphere); Cross-section libraries: XCOM, EPDL2017, Livermore; High-performance computing cluster.

Methodology:

- Setup: Implement a simple sphere phantom (10 cm radius, soft tissue composition) in the MC code. Place a point isotropic source of mono-energetic photons at the center.

- Simulation A: Use the default (often XCOM-derived) cross-section library. Simulate 10⁹ primary photon histories and track:

  - Energy deposited in the phantom (MeV).

  - Number and energy spectrum of photons escaping the phantom surface within a defined solid angle.

- Simulation B: Repeat the simulation using a high-fidelity library (EPDL2017). Ensure all other physics processes (e.g., electron tracking, cut-offs) are identical.

- Analysis: Repeat Simulations A and B with the mono-energetic source set to each key energy (30, 70, 140, 511 keV). For each energy, calculate the percentage difference in (a) total energy deposition and (b) escape flux between Simulations A and B. Perform a chi-squared test to assess the statistical significance of discrepancies (significance threshold p < 0.01).
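The escape-flux part of the Analysis step can be sketched as a percentage difference plus a one-degree-of-freedom chi-squared test on the two escape counts. The test assumes Poisson statistics and equal numbers of primary histories in both runs. Escape counts are strictly binomial, so the Poisson variance is slightly conservative. The counts and function names below are hypothetical placeholders, not results. Energy-deposition comparisons would instead use per-history means and their standard errors, which are not shown here.

```python
import math


def percent_difference(reference, test):
    """Signed percentage difference of test relative to reference."""
    return 100.0 * (test - reference) / reference


def escape_count_test(n_a, n_b):
    """1-dof chi-squared test that two runs with equal primary histories share
    the same expected escape count (Poisson approximation).

    Under H0, z = (n_b - n_a) / sqrt(n_a + n_b) is approximately standard normal,
    so chi2 = z**2 has 1 degree of freedom and p = erfc(|z| / sqrt(2)).
    """
    total = n_a + n_b
    if total == 0:
        return 0.0, 1.0
    z = (n_b - n_a) / math.sqrt(total)
    return z * z, math.erfc(abs(z) / math.sqrt(2.0))


# Hypothetical escape counts for one energy (placeholders, not results)
escaped_a, escaped_b = 1_000_000, 1_030_000
chi2, p_value = escape_count_test(escaped_a, escaped_b)
print(f"diff = {percent_difference(escaped_a, escaped_b):+.2f} %, chi2 = {chi2:.1f}, p = {p_value:.3g}")
print("significant" if p_value < 0.01 else "not significant")
```

For full escape spectra, the same idea extends to a multi-bin chi-squared homogeneity test with more degrees of freedom.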

Protocol 2: Assessing the Impact of Geometrical Fidelity

Objective: To evaluate the error in computed attenuation correction factors (ACFs) due to anatomical simplification.

Materials: Patient CT dataset (DICOM); 3D Slicer or MITK software; Voxelized phantom creation script; MC simulation software (e.g., GATE); High-resolution anatomical atlas phantom (e.g., XCAT).

Methodology:

- Model Creation:

- High-Fidelity Model (Ground Truth): Segment a patient CT dataset (e.g., thorax) into 5-6 tissue types (lung, soft tissue, bone, cartilage, vessel). Assign material properties and densities based on CT Hounsfield Units. Create a voxelized phantom (~1 mm³ voxels).

- Simplified Model: From the same CT, create a homogenized version. For example, define the entire lung volume as a single material with averaged density. Smooth boundaries.

- Simulation: For each model, simulate a uniform activity distribution within the lungs. Use identical, high-fidelity cross-section data and physics lists.

- Run the MC simulation to generate projection data (sinograms) for a 360° acquisition.

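- ACF Calculation: For each line of response, compute the attenuation correction factor by either of two standard methods. One is the ratio of unattenuated to attenuated simulated projections. The other is calculated analytically as ACF = exp(∫μ dl) along that line through the corresponding model's μ-map.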

- Validation: Compare ACF sinograms and regional mean ACF values (e.g., in lung, near spine) between the two models. Calculate the root-mean-square error (RMSE) and max pixel error.
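The model-creation, ACF, and validation steps above can be prototyped on a single synthetic 2D slice before any MC run. The sketch makes several simplifying assumptions:

- The HU thresholds (-400 and 300 HU) are illustrative.
- The linear water-referenced density mapping ρ ≈ 1 + HU/1000 g/cm³ is a simplification; scanner-specific calibrations, often bilinear, are used in practice.
- A single 511 keV mass attenuation coefficient of 0.096 cm²/g is applied to every tissue.
- ACFs are computed for parallel rays along one array axis rather than true sinogram geometry.

All function names are hypothetical.

```python
import numpy as np

LUNG, SOFT_TISSUE, BONE = 1, 2, 3  # hypothetical material labels
LUNG_MAX_HU, BONE_MIN_HU = -400.0, 300.0  # illustrative thresholds (assumption)
MU_OVER_RHO_511 = 0.096  # cm^2/g, water-like value applied to all tissues (assumption)


def label_materials(hu):
    """Assign a coarse material label per voxel by HU thresholding."""
    labels = np.full(hu.shape, SOFT_TISSUE, dtype=np.int8)
    labels[hu < LUNG_MAX_HU] = LUNG
    labels[hu > BONE_MIN_HU] = BONE
    return labels


def hu_to_density(hu):
    """Linear approximation rho = 1 + HU/1000 (g/cm^3), floored so air stays positive."""
    return np.clip(1.0 + hu / 1000.0, 1e-3, None)


def homogenize(density, mask):
    """Simplified model: replace all voxels in mask with their mean density."""
    out = density.copy()
    if mask.any():
        out[mask] = density[mask].mean()
    return out


def acf_parallel(density, voxel_cm, axis=0):
    """ACF = exp(line integral of mu) for parallel rays along one array axis."""
    mu = MU_OVER_RHO_511 * density  # cm^-1
    return np.exp(mu.sum(axis=axis) * voxel_cm)


def compare_acf(acf_ref, acf_test):
    """Return (RMSE, max absolute error) between two ACF profiles."""
    diff = acf_test - acf_ref
    return float(np.sqrt(np.mean(diff ** 2))), float(np.max(np.abs(diff)))


# Synthetic slice with 1 mm (0.1 cm) voxels: soft tissue, a heterogeneous lung
# volume, and a vessel-like dense structure inside the lung.
rng = np.random.default_rng(0)
hu = np.full((200, 200), 40.0)
lung = np.zeros(hu.shape, dtype=bool)
lung[50:150, 30:170] = True  # segmented lung volume, including internal vessels
hu[lung] = -850.0 + 50.0 * rng.standard_normal(int(lung.sum()))
hu[90:110, 60:140] = 30.0

labels = label_materials(hu)  # per-voxel materials for a voxelized MC phantom
rho_true = hu_to_density(hu)  # high-fidelity (ground truth) model
rho_simple = homogenize(rho_true, lung)  # single averaged lung material
acf_true = acf_parallel(rho_true, voxel_cm=0.1)
acf_simple = acf_parallel(rho_simple, voxel_cm=0.1)
rmse, max_err = compare_acf(acf_true, acf_simple)
print(f"ACF RMSE = {rmse:.4f}, max error = {max_err:.4f}")
```

Homogenizing preserves the total lung mass but redistributes it across rays. The per-ray ACFs therefore change even though the mean lung density is unchanged, which is the effect Protocol 2 is designed to quantify.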

Diagram Title: Protocol Workflow for Pitfall Quantification

Diagram Title: Logical Flow from Pitfalls to Research Impact

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Digital Tools for Mitigating Pitfalls

| Item Name | Category | Function & Rationale |
|---|---|---|
| Geant4 (v11.1+) | MC Software Toolkit | Provides modular physics lists (e.g., G4EmLivermorePhysics) with updated, validated cross-section data down to low energies. |
| GATE/STIR Platform | Integrated Simulation & Reconstruction | Enables direct linkage of voxelized CT/MRI anatomical models with MC physics for geometry-aware simulations. |
| XCAT 4.0 Phantom | Digital Anatomical Model | Offers parameterized, high-resolution (sub-mm) models of human anatomy with natural tissue heterogeneity, superior to simple ellipsoids. |
| EPDL2017 Library (IAEA Nuclear Data Section) | Cross-Section Database | A recent release of the Evaluated Photon Data Library; suitable as a high-fidelity reference for benchmarking and high-accuracy simulations. |
| DICOM to Voxelized Phantom Converter (e.g., dcm2niix + custom scripts) | Data Processing Tool | Transforms clinical CT DICOM images into simulation-ready voxelized phantoms with material labeling via HU thresholds. |
| High-Performance Computing (HPC) Cluster | Computational Resource | Necessary for running the billions of particle histories required for statistically significant, high-fidelity simulations. |